Safety

Featured matches

Trust scores, cryptographic proof, and risk assessment for AI agents.

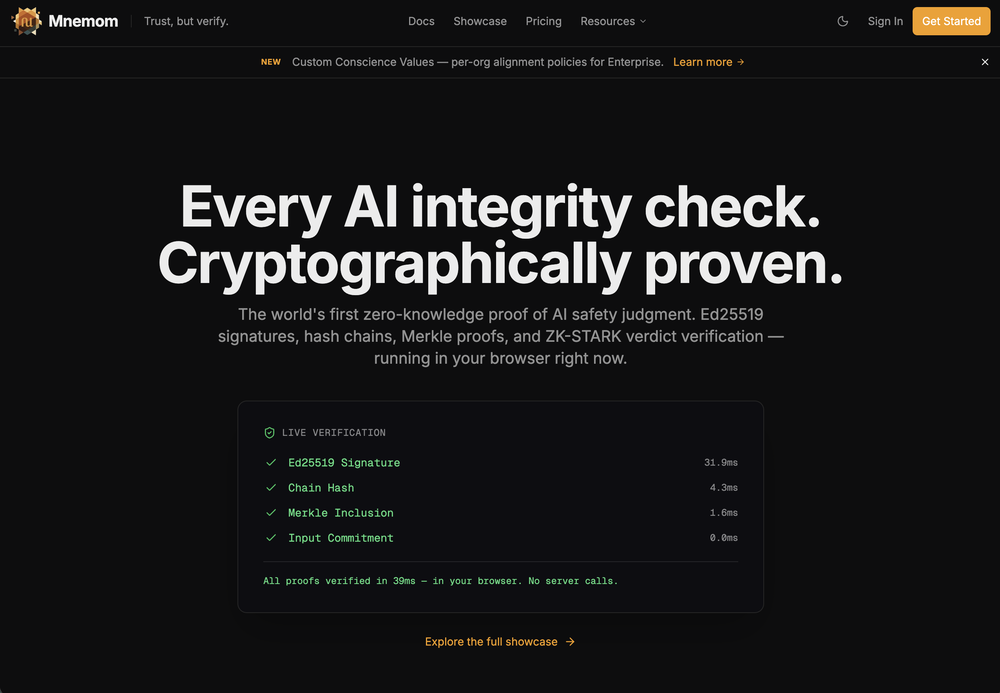

Alex Garden · 🛠️ 1 tool · Feb 24, 2026 · @Mnemom

We built Mnemom because we were shipping AI agents into production and couldn't answer a basic question: how do you prove this thing is doing what you told it to? Not monitor. Prove. So we built the proof layer. Cryptographic attestations, trust scores, risk assessment, containment: everything you need to deploy agents you can actually stand behind. It's free to start and open source. We'd love to hear what you think - new features shipping daily.

Really smooth experience with Vivgrid; it makes taking agents to production way easier.

Gave it a try. Before, I used Calendly and manually tagged them as important. I had also used some trackers from the App Store, but this works just as well, if not better. Really like it!

Verified tools

Great app. I had never tried anything so specific and professional. The advanced mode is really comprehensive, even though it is quite complex for beginners. It’s truly Gym Bro–level. For now, I’m testing the training mode, which is more accessible for me.

We built ModelRed because most teams don't test AI products for security vulnerabilities until something breaks. ModelRed continuously tests LLMs for prompt injections, data leaks, and exploits. Works with any provider and integrates into CI/CD. Happy to answer questions about AI security!
