What kind of data can OpenDCAI/DataFlow's ready-to-use data synthesis and cleaning pipelines generate?

OpenDCAI/DataFlow's ready-to-use data synthesis and cleaning pipelines generate and clean text, math, and code data.

What role does AI play in Data Preparation with OpenDCAI/DataFlow?

In OpenDCAI/DataFlow, AI powers operators that perform tasks such as data preparation and refining, noise reduction, source filtering, and large language model training.

How does OpenDCAI/DataFlow handle noise reduction in data?

Noise reduction in DataFlow is achieved through sophisticated AI-powered operators. They intelligently sift through the data, refining and filtering noisy data sources such as low-quality QA or PDFs, ensuring only high-quality data is utilized.
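
As a rough illustration of filtering low-quality QA records, the sketch below uses a simple heuristic scorer as a stand-in for an AI-powered operator. The names `score_qa` and `filter_noisy_qa`, the scoring rules, and the threshold are all hypothetical; they are not part of DataFlow's API, and a real operator would likely rely on a model-based score instead.

```python
from typing import Dict, List


def score_qa(record: Dict[str, str]) -> float:
    """Hypothetical heuristic stand-in for a model-based quality scorer."""
    question = record.get("question", "").strip()
    answer = record.get("answer", "").strip()
    score = 0.0
    if question.endswith("?"):  # looks like a well-formed question
        score += 0.5
    if len(answer.split()) >= 3:  # answer has at least a few words
        score += 0.5
    return score


def filter_noisy_qa(records: List[Dict[str, str]], threshold: float = 1.0) -> List[Dict[str, str]]:
    """Keep only records whose score reaches the (assumed) threshold."""
    return [r for r in records if score_qa(r) >= threshold]


samples = [
    {"question": "What is DataFlow?", "answer": "A data preparation system for LLMs."},
    {"question": "asdf", "answer": "n/a"},
]
clean = filter_noisy_qa(samples)
```

Here only the first record passes both checks, so `clean` holds a single well-formed QA pair.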

How does the source filtering feature in OpenDCAI/DataFlow work?

The source filtering feature in OpenDCAI/DataFlow helps in the selective processing of information from the data sources. It filters out less useful information, allowing for the focused and efficient handling of data.

How does the OpenDCAI/DataFlow tool aid in Data Refining?

Data refining in OpenDCAI/DataFlow is the process of improving data quality. It involves removing noise and errors and transforming and cleaning data to raise the overall quality of datasets, which in turn improves model training performance.

How does OpenDCAI/DataFlow create reproducible, reusable, and shareable pipelines?

OpenDCAI/DataFlow creates reproducible, reusable, and shareable pipelines through its operator-based design. This design approach allows users to encapsulate tasks in self-contained modules or operators, which can be combined in various ways to create task-specific pipelines. These pipelines serve as ready-to-use solutions that can be shared and reused across various projects.
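
The operator idea can be sketched in plain Python: each operator is a self-contained function over a list of records, and a pipeline is an ordered list of operators. This is a minimal sketch of the general pattern, not DataFlow's actual classes; `Pipeline`, `strip_whitespace`, `drop_empty_text`, and `deduplicate` are hypothetical names chosen for illustration.

```python
from typing import Callable, Dict, List

Record = Dict[str, str]
Operator = Callable[[List[Record]], List[Record]]


def strip_whitespace(records: List[Record]) -> List[Record]:
    """Trim leading and trailing whitespace from every field."""
    return [{k: v.strip() for k, v in r.items()} for r in records]


def drop_empty_text(records: List[Record]) -> List[Record]:
    """Remove records whose 'text' field is missing or empty."""
    return [r for r in records if r.get("text")]


def deduplicate(records: List[Record]) -> List[Record]:
    """Keep the first occurrence of each distinct 'text' value."""
    seen = set()
    unique = []
    for r in records:
        if r["text"] not in seen:
            seen.add(r["text"])
            unique.append(r)
    return unique


class Pipeline:
    """Hypothetical pipeline: applies operators in the order given."""

    def __init__(self, operators: List[Operator]):
        self.operators = list(operators)

    def run(self, records: List[Record]) -> List[Record]:
        for op in self.operators:
            records = op(records)
        return records


pipeline = Pipeline([strip_whitespace, drop_empty_text, deduplicate])
result = pipeline.run([{"text": "  hello "}, {"text": "hello"}, {"text": "   "}])
```

Because the pipeline is just an ordered list of operators, the same definition can be rerun on new data or shared with another project unchanged.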

How relevant is OpenDCAI/DataFlow in the Data-Centric AI community?

OpenDCAI/DataFlow is a significant tool for the Data-Centric AI community, providing a fundamental infrastructure that transforms the data cleaning workflow into a standardized, reusable, and reproducible process. It allows for dynamic creation and recombination of operators, catering to various data-centric tasks across different domains.

What types of data can OpenDCAI/DataFlow prepare?

OpenDCAI/DataFlow can handle a variety of data, including PDFs, plain text, and low-quality QA, transforming them into high-quality data ready for training models. It supports data synthesis and cleaning for text, math, and code data.

Are new operators created on demand with OpenDCAI/DataFlow?

Yes, new operators can be created on demand inside OpenDCAI/DataFlow to tackle specific data handling tasks. This flexibility allows users to construct pipelines tailored to their project requirements.

How are existing operators recombined in OpenDCAI/DataFlow?

Existing operators in OpenDCAI/DataFlow are recombined through the built-in DataFlow-agent. Using its dynamic pipeline assembly, the agent can select and merge different operators to form new pipelines as required.
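
How the agent chooses operators is not described here, so the sketch below only illustrates the recombination step: operators live in a registry and new pipelines are assembled from the same building blocks by name. `REGISTRY` and `assemble` are hypothetical and are not DataFlow's API.

```python
from typing import Callable, Dict, List

TextOperator = Callable[[List[str]], List[str]]

# Hypothetical registry of reusable operators, keyed by name.
REGISTRY: Dict[str, TextOperator] = {
    "strip": lambda texts: [t.strip() for t in texts],
    "lowercase": lambda texts: [t.lower() for t in texts],
    "drop_short": lambda texts: [t for t in texts if len(t) >= 5],
}


def assemble(names: List[str]) -> TextOperator:
    """Build a pipeline function from operator names, applied in order."""
    ops = [REGISTRY[name] for name in names]

    def run(texts: List[str]) -> List[str]:
        for op in ops:
            texts = op(texts)
        return texts

    return run


# Two different pipelines built from the same registered operators.
cleaning = assemble(["strip", "lowercase"])
strict = assemble(["strip", "drop_short", "lowercase"])

data = ["  Hello World ", " Hi "]
cleaned = cleaning(data)
strict_cleaned = strict(data)
```

The second pipeline reuses the existing operators and adds a length filter, so the short entry is dropped without any new operator code.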

How does OpenDCAI/DataFlow help improve the quality of language models?

OpenDCAI/DataFlow helps improve the quality of language models by supporting training strategies such as pre-training, supervised fine-tuning, and reinforcement learning training. Its ability to refine and generate high-quality data also contributes to the robustness and performance of large language models.

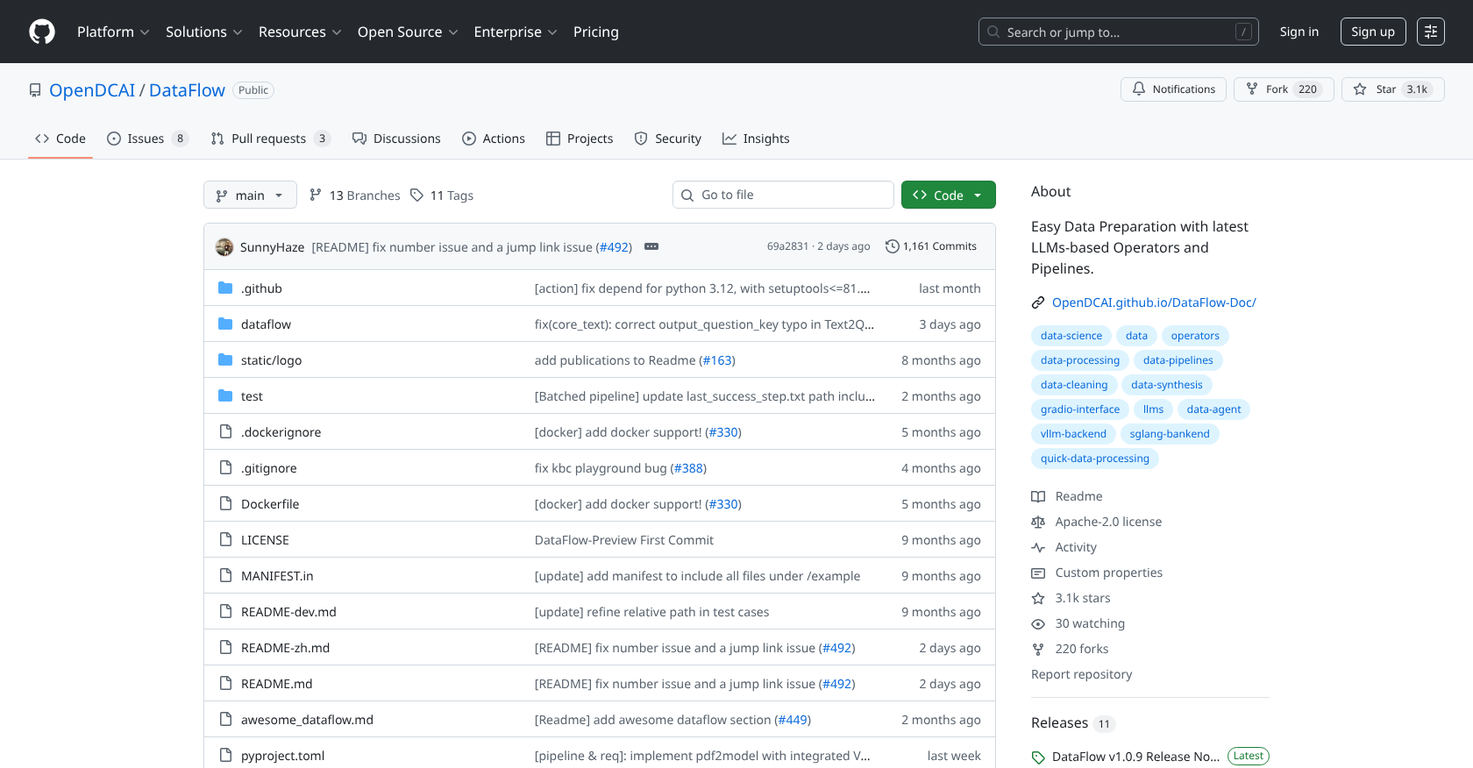

What does the GitHub page title mean by 'Easy Data Preparation with latest LLMs-based Operators and Pipelines'?

The GitHub page title refers to OpenDCAI/DataFlow's capability of providing easy preparation of datasets using state-of-the-art, user-friendly operators and pipelines designed around Large Language Models (LLMs).

Why should I use OpenDCAI/DataFlow for data preparation?

OpenDCAI/DataFlow provides a comprehensive solution for data preparation tasks, including generating, refining, evaluating, and filtering data from a variety of sources. The tool's operator-based design allows users to establish reproducible, reusable, and shareable pipelines, making it an effective tool for data preparation.

How does OpenDCAI/DataFlow handle challenging data sources like PDFs?

OpenDCAI/DataFlow can process challenging data sources like PDFs by generating, refining, evaluating, and filtering data from these noisy sources, turning them into high-quality datasets. Its operator-based design and built-in DataFlow-agent allow for flexibility and control in managing these complex data types, thereby producing ready-for-use data for training large language models.

What is the main purpose of OpenDCAI/DataFlow?

The main purpose of OpenDCAI/DataFlow is to facilitate comprehensive data preparation and training. It is designed to generate, refine, evaluate and filter high-quality data for AI systems from noisy sources, thereby helping to boost the performance of Large Language Models in specific domains.

What types of data sources can OpenDCAI/DataFlow process?

OpenDCAI/DataFlow can process a variety of data sources, including PDFs, plain text, and low-quality QA. It is designed to filter noisy data and generate high-quality data for AI systems.

Can OpenDCAI/DataFlow be used for specific domains such as healthcare or finance?

Yes, OpenDCAI/DataFlow is adjustable to function in a variety of domains, including healthcare and finance. It improves the performance of Large Language Models in these specific fields through targeted training.

How does OpenDCAI/DataFlow contribute to the improvement of large language models?

OpenDCAI/DataFlow contributes to the improvement of Large Language Models through focused training such as pre-training, supervised fine-tuning, and RL training. Its low-code pipelines help prepare high-quality LLM training datasets from raw data.

What makes the operator-based design of OpenDCAI/DataFlow significant?

The operator-based design of OpenDCAI/DataFlow allows it to transform the entire data cleaning workflow into a reproducible, reusable, and shareable pipeline. This design is significant as it enables OpenDCAI/DataFlow to dynamically assemble new pipelines by either combining existing operators or creating new ones based on demand.

How does OpenDCAI/DataFlow ensure reproducibility in the data cleaning workflow?

OpenDCAI/DataFlow ensures reproducibility in the data cleaning workflow by turning the entire process into a shareable pipeline. The intelligent DataFlow-agent included in the system can dynamically assemble new pipelines by recombining existing operators or creating new ones on demand.

What is the functionality of the built-in DataFlow-agent?

The built-in DataFlow-agent in OpenDCAI/DataFlow acts as an intelligent assistant that can assemble new pipelines dynamically, either by recombining existing operators or creating new ones based on the specific requirements.

What kind of datasets can OpenDCAI/DataFlow generate?

OpenDCAI/DataFlow aids in generating high-quality Large Language Model training datasets from raw data. It supports the generation of text, math, and code data, and also offers data synthesis and cleaning pipelines.

How does OpenDCAI/DataFlow handle the conversion of PDF to QA?

OpenDCAI/DataFlow handles the conversion of PDF to QA through a featured tool that performs large-scale conversion. It enables extraction of high-quality data from large quantities of PDF files.

Can OpenDCAI/DataFlow execute structured data extraction?

Yes, OpenDCAI/DataFlow is equipped to execute structured data extraction. This is part of its extensive data preparation process which includes the conversion of PDFs to QA, handling of noisy data, and generation of different types of data such as text, math and code.

What is the role of AI-powered operators in OpenDCAI/DataFlow's data preparation process?

AI-powered operators in OpenDCAI/DataFlow serve as crucial components in the data preparation process. They help in generating, refining, evaluating and filtering high-quality data, facilitating successful and efficient data-centric AI operations.

What is 'flexible orchestration' in the context of OpenDCAI/DataFlow?

In the context of OpenDCAI/DataFlow, 'flexible orchestration' refers to the dynamic and customizable assembly of new pipelines. The built-in intelligent DataFlow-agent can recombine existing operators or create new ones based on need, offering a flexible, efficient, and personalized data preparation process.

Can OpenDCAI/DataFlow recombine existing operators or create new ones?

Yes, one of the key features of OpenDCAI/DataFlow is the ability to dynamically assemble new pipelines. The built-in DataFlow-agent can recombine existing operators or create new ones based on the specific demand, allowing for a highly effective and personalized data preparation process.

What are the key features of OpenDCAI/DataFlow?

The key features of OpenDCAI/DataFlow include ready-to-use data synthesis and cleaning pipelines; flexible custom pipeline orchestration; a reproducible, reusable, and shareable Data-Centric AI system; and comprehensive support for creating custom operators that are easily packaged and distributed.

What is meant by 'low-code pipelines' in OpenDCAI/DataFlow?

The term 'low-code pipelines' in OpenDCAI/DataFlow refers to its pipeline assembly feature which requires minimal coding. It signifies a user-friendly design that enables users to generate high-quality Large Language Model training datasets from raw data with ease.

How does OpenDCAI/DataFlow handle noisy data refinement?

OpenDCAI/DataFlow handles noisy data refinement through its comprehensive toolset for data preparation. It generates, refines, evaluates, and filters data from noisy sources such as PDFs, plain text, and low-quality question-answer sets, resulting in high-quality data ready for AI applications.

What capabilities does OpenDCAI/DataFlow provide for academic research?

OpenDCAI/DataFlow provides capabilities for academic research through its ability to handle a wide range of data types and orchestrate them into high-quality datasets suitable for analysis. It can refine data from diverse sources, support data generation, including text, math, and code, ensure reproducibility of data cleaning workflows, and equip researchers with ready-to-use data synthesis and cleaning pipelines.

What does the Text2SQL tool do in OpenDCAI/DataFlow?

In OpenDCAI/DataFlow, the Text2SQL tool is a data generation tool that translates natural language questions into SQL queries. It aids in the creation of structured data, contributing to the high-quality training data generation.
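
One plausible quality check for generated question and SQL pairs is to confirm that each query actually executes against the target schema. The sketch below does this with Python's standard `sqlite3` module; the schema, the sample pairs, and the helper `sql_executes` are hypothetical and do not reflect how DataFlow's Text2SQL tool is implemented.

```python
import sqlite3


def sql_executes(schema: str, query: str) -> bool:
    """Return True if the query runs without error on an in-memory database."""
    conn = sqlite3.connect(":memory:")
    try:
        conn.executescript(schema)  # create the (assumed) tables
        conn.execute(query).fetchall()  # run the candidate query
        return True
    except sqlite3.Error:
        return False
    finally:
        conn.close()


schema = "CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT, age INTEGER);"

pairs = [
    {
        "question": "How many users are older than 30?",
        "sql": "SELECT COUNT(*) FROM users WHERE age > 30",
    },
    {
        # References a column that does not exist in the schema.
        "question": "List all user emails.",
        "sql": "SELECT email FROM users",
    },
]

valid_pairs = [p for p in pairs if sql_executes(schema, p["sql"])]
```

Only the first pair survives, because the second query refers to an `email` column that the schema does not define. Executability is a necessary check rather than a sufficient one: a query can run and still fail to answer the question.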

What is the AgenticRAG tool used for in OpenDCAI/DataFlow?

In OpenDCAI/DataFlow, the AgenticRAG tool is used for data generation. It identifies and extracts question-answer pairs that require external knowledge to answer from existing QA datasets or knowledge bases, making it useful for downstream training on Agentic RAG tasks.

What is the application of OpenDCAI/DataFlow in the legal domain?

OpenDCAI/DataFlow has applications in the legal domain because it can process and refine data and generate high-quality data from a variety of sources. The refined data can then be used to train legal-focused AI models, which can improve their accuracy and relevance in the legal field.
