Tencent / NeuralNLP-NeuralClassifier

NeuralClassifier: An Open-source Neural Hierarchical Multi-label Text Classification Toolkit

Introduction

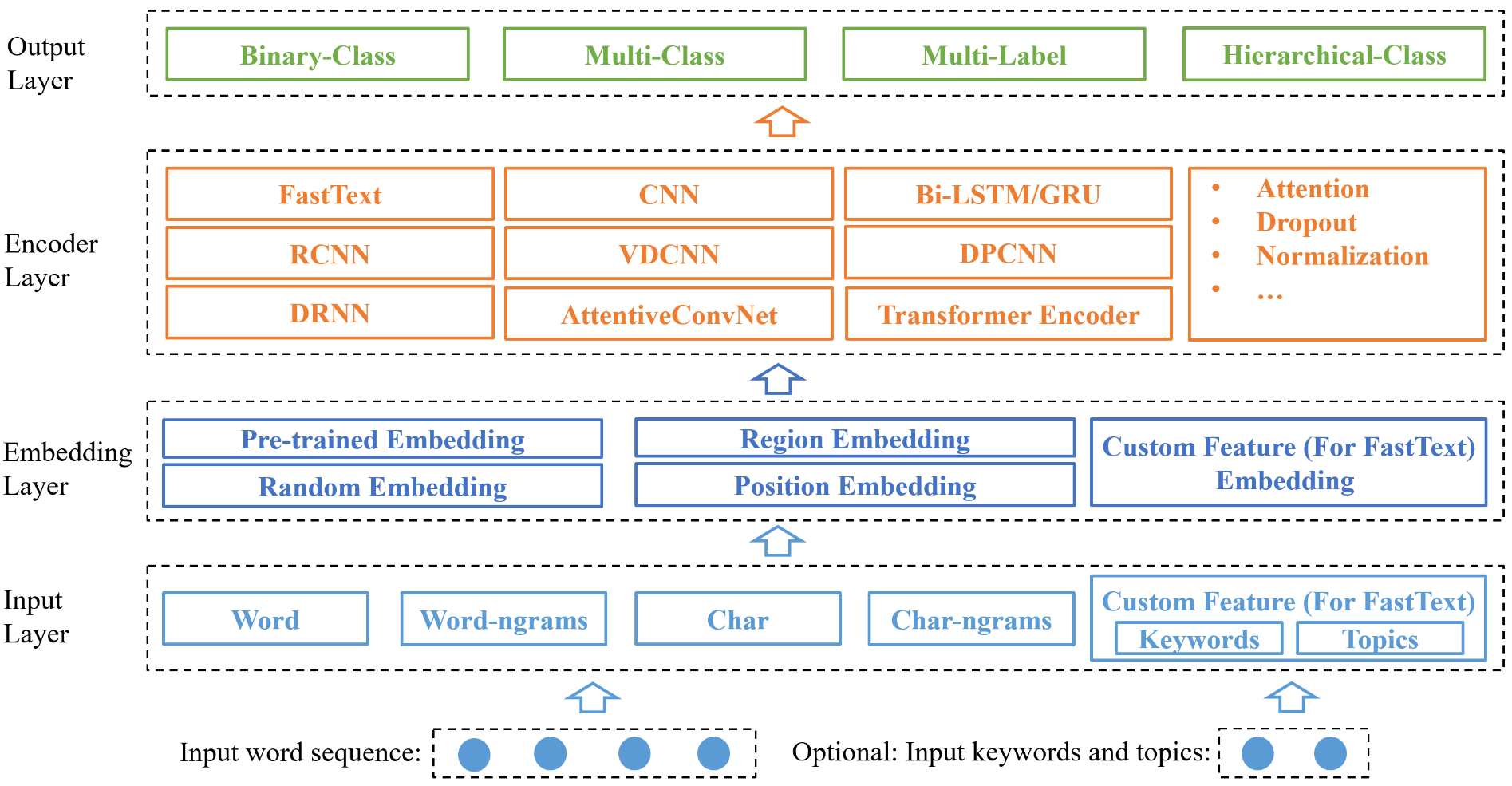

NeuralClassifier is designed for quick implementation of neural models for hierarchical multi-label classification tasks, which are more challenging and common in real-world scenarios. A salient feature is that NeuralClassifier currently provides a variety of text encoders, including FastText, TextCNN, TextRNN, RCNN, VDCNN, DPCNN, DRNN, AttentiveConvNet and Transformer encoder. It also supports other text classification scenarios, including binary-class and multi-class classification. It is built on PyTorch. Experiments show that models built with our toolkit achieve performance comparable to results reported in the literature.

Supported tasks

- Binary-class text classification

- Multi-class text classification

- Multi-label text classification

- Hierarchical (multi-label) text classification (HMC)

Supported text encoders

- TextCNN (Kim, 2014)

- RCNN (Lai et al., 2015)

- TextRNN (Liu et al., 2016)

- FastText (Joulin et al., 2016)

- VDCNN (Conneau et al., 2016)

- DPCNN (Johnson and Zhang, 2017)

- AttentiveConvNet (Yin and Schütze, 2017)

- DRNN (Wang, 2018)

- Region embedding (Qiao et al., 2018)

- Transformer encoder (Vaswani et al., 2017)

- Star-Transformer encoder (Guo et al., 2019)

- HMCN (Wehrmann et al., 2018)

Requirements

- Python 3

- PyTorch 0.4+

- Numpy 1.14.3+

System Architecture

Usage

Training

How to train a non-hierarchical classifier

python train.py conf/train.json

- set task_info.hierarchical = false.

- model_name can be FastText, TextCNN, TextRNN, TextRCNN, DRNN, VDCNN, DPCNN, AttentiveConvNet, or Transformer.

How to train a hierarchical classifier using hierarchical penalty

python train.py conf/train.hierar.json

- set task_info.hierarchical = true.

- model_name can be FastText, TextCNN, TextRNN, TextRCNN, DRNN, VDCNN, DPCNN, AttentiveConvNet, or Transformer.

How to train a hierarchical classifier with HMCN

python train.py conf/train.hmcn.json

- set task_info.hierarchical = false.

- set model_name = HMCN.

For detailed configuration options and explanations, see Configuration.

Training information is written to standard output and to log.logger_file.
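The three training setups above differ only in a couple of configuration values, so one way to manage them is to derive each variant from a shared base. The sketch below is illustrative only: it assumes that a dotted name such as task_info.hierarchical refers to a nested key in the JSON config and that model_name is a top-level key. The helpers set_dotted and derive_config are hypothetical and are not part of the toolkit, and the base dictionary is a small stand-in for the real conf/train.json.

```python
import copy
import json


def set_dotted(config, dotted_key, value):
    """Set config["a"]["b"] = value for dotted_key "a.b", creating dicts as needed."""
    node = config
    *parents, leaf = dotted_key.split(".")
    for name in parents:
        node = node.setdefault(name, {})
    node[leaf] = value
    return config


def derive_config(base, overrides):
    """Return a deep copy of base with each dotted override applied."""
    derived = copy.deepcopy(base)
    for dotted_key, value in overrides.items():
        set_dotted(derived, dotted_key, value)
    return derived


# Stand-in for the contents of conf/train.json (assumed layout, far from complete).
base = {"task_info": {"hierarchical": False}, "model_name": "TextCNN"}

# Hierarchical classifier trained with the hierarchical penalty.
hierarchical = derive_config(
    base, {"task_info.hierarchical": True, "model_name": "TextRCNN"}
)

# HMCN: the hierarchical flag stays false and model_name selects HMCN.
hmcn = derive_config(
    base, {"task_info.hierarchical": False, "model_name": "HMCN"}
)

print(json.dumps(hierarchical, indent=2))
print(json.dumps(hmcn, indent=2))
```

Writing each derived dictionary to its own file with json.dump would give a config that can be passed to train.py in the same way as the shipped examples.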

Evaluation

python eval.py conf/train.json

- if eval.is_flat = false, hierarchical evaluation results are also output.

- eval.model_dir is the model to evaluate.

- data.test_json_files is the input text file to evaluate.

Evaluation results are written to eval.dir.

Prediction

python predict.py conf/train.json data/predict.json

- predict.json should be in JSON format, and each instance should carry a dummy label such as "其他" ("other") or any other label in the label map.

- eval.model_dir is the model to predict.

- eval.top_k is the number of labels to output.

- eval.threshold is the probability threshold.

Prediction results are written to predict.txt.
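The sketch below shows one plausible reading of how eval.top_k and eval.threshold could interact when labels are chosen for an instance: rank labels by probability, keep at most top_k, and drop any that fall below the threshold. This is an assumption made for illustration, not the toolkit's confirmed selection logic, and select_labels is a hypothetical helper.

```python
def select_labels(probabilities, label_names, top_k, threshold):
    """Return up to top_k label names whose probability is at least threshold.

    Labels are ranked from most to least probable. Ties keep their
    original order because Python's sort is stable.
    """
    ranked = sorted(
        zip(label_names, probabilities),
        key=lambda pair: pair[1],
        reverse=True,
    )
    return [name for name, prob in ranked[:top_k] if prob >= threshold]


names = ["A", "B", "C", "D"]
probs = [0.9, 0.2, 0.6, 0.55]

print(select_labels(probs, names, top_k=2, threshold=0.5))
print(select_labels(probs, names, top_k=3, threshold=0.58))
print(select_labels(probs, names, top_k=3, threshold=0.95))
```

Under this reading, top_k caps the number of labels per instance while the threshold can reduce the output further, possibly to an empty list.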

Input Data Format

JSON example:

{

"doc_label": ["Computer--MachineLearning--DeepLearning", "Neuro--ComputationalNeuro"],

"doc_token": ["I", "love", "deep", "learning"],

"doc_keyword": ["deep learning"],

"doc_topic": ["AI", "Machine learning"]

}
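A record in this format can be built and checked programmatically before training. The following is a minimal sketch, not toolkit code: make_record, validate_record and label_path are hypothetical helpers, treating "--" as the separator between hierarchy levels is inferred from the example labels above, and serializing one JSON object per line is an assumption about the file layout.

```python
import json

REQUIRED_FIELDS = ("doc_label", "doc_token")
OPTIONAL_FIELDS = ("doc_keyword", "doc_topic")


def make_record(labels, tokens, keywords=None, topics=None):
    """Build one instance; optional fields are included only when given."""
    record = {"doc_label": list(labels), "doc_token": list(tokens)}
    if keywords is not None:
        record["doc_keyword"] = list(keywords)
    if topics is not None:
        record["doc_topic"] = list(topics)
    return record


def validate_record(record):
    """Raise ValueError unless the required fields exist and all fields are lists of strings."""
    for field in REQUIRED_FIELDS:
        if field not in record:
            raise ValueError(f"missing required field: {field}")
    for field in REQUIRED_FIELDS + OPTIONAL_FIELDS:
        values = record.get(field, [])
        if not isinstance(values, list) or not all(isinstance(v, str) for v in values):
            raise ValueError(f"{field} must be a list of strings")


def label_path(label, separator="--"):
    """Split a hierarchical label into its levels, root first (assumed separator)."""
    return label.split(separator)


record = make_record(
    labels=["Computer--MachineLearning--DeepLearning", "Neuro--ComputationalNeuro"],
    tokens=["I", "love", "deep", "learning"],
    keywords=["deep learning"],
)
validate_record(record)

# ensure_ascii=False keeps non-ASCII labels readable in the output file.
line = json.dumps(record, ensure_ascii=False)
print(line)
print(label_path(record["doc_label"][0]))
```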

"doc_keyword" and "doc_topic" are optional.

Performance

0. Dataset

<table>

<tr><th>Dataset<th>Taxonomy<th>#Label<th>#Training<th>#Test

<tr><td>RCV1<td>Tree<td>103<td>23,149<td>781,265

<tr><td>Yelp<td>DAG<td>539<td>87,375<td>37,265

</table>

- RCV1: Lewis et al., 2004

- Yelp: Yelp

1. Comparison with the state of the art

<table>

<tr><th>Text Encoders<th>Micro-F1 on RCV1<th>Micro-F1 on Yelp

<tr><td>HR-DGCNN (Peng et al., 2018)<td>0.7610<td>-

<tr><td>HMCN (Wehrmann et al., 2018)<td>0.8080<td>0.6640

<tr><td>Ours<td><strong>0.8313</strong><td><strong>0.6704</strong>

</table>

- HR-DGCNN: Peng et al., 2018

- HMCN: Wehrmann et al., 2018

2. Different text encoders

<table>

<tr><th rowspan='2'>Text Encoders<th colspan='2'>RCV1<th colspan='2'>Yelp

<tr><th>Micro-F1<th>Macro-F1<th>Micro-F1<th>Macro-F1

<tr><td>TextCNN<td>0.7717<td>0.5246<td>0.6281<td>0.3657

<tr><td>TextRNN<td>0.8152<td>0.5458<td><strong>0.6704</strong><td>0.4059

<tr><td>RCNN<td><strong>0.8313</strong><td><strong>0.6047</strong><td>0.6569<td>0.3951

<tr><td>FastText<td>0.6887<td>0.2701 <td>0.6031<td>0.2323

<tr><td>DRNN<td>0.7846 <td>0.5147<td>0.6579<td>0.4401

<tr><td>DPCNN<td>0.8220 <td>0.5609 <td>0.5671 <td>0.2393

<tr><td>VDCNN<td>0.7263 <td>0.3860<td>0.6395<td>0.4035

<tr><td>AttentiveConvNet<td>0.7533<td>0.4373<td>0.6367<td>0.4040

<tr><td>RegionEmbedding<td>0.7780 <td>0.4888 <td>0.6601<td><strong>0.4514</strong>

<tr><td>Transformer<td>0.7603 <td>0.4274<td>0.6533<td>0.4121

<tr><td>Star-Transformer<td>0.7668 <td>0.4840<td>0.6482<td>0.3895

</table>

- Results were obtained with 300-dimensional pretrained GloVe embeddings.
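The gap between Micro-F1 and Macro-F1 in the table follows from how the two scores are aggregated. The sketch below uses the standard multi-label definitions: Micro-F1 pools true positives, false positives and false negatives over all labels, while Macro-F1 averages the per-label F1 scores. It is a hypothetical standalone helper and not the toolkit's evaluator, which may differ in details such as hierarchical evaluation.

```python
def f1(tp, fp, fn):
    """F1 from raw counts; defined as 0.0 when there is nothing to score."""
    denominator = 2 * tp + fp + fn
    return 2 * tp / denominator if denominator else 0.0


def micro_macro_f1(gold, predicted, labels):
    """Compute (micro_f1, macro_f1) for parallel lists of gold and predicted label sets."""
    counts = {label: [0, 0, 0] for label in labels}  # [tp, fp, fn] per label
    for gold_set, pred_set in zip(gold, predicted):
        for label in labels:
            in_gold, in_pred = label in gold_set, label in pred_set
            if in_gold and in_pred:
                counts[label][0] += 1
            elif in_pred:
                counts[label][1] += 1
            elif in_gold:
                counts[label][2] += 1
    # Micro: sum the counts over labels first, then compute a single F1.
    totals = [sum(c[i] for c in counts.values()) for i in range(3)]
    micro = f1(*totals)
    # Macro: compute F1 per label, then take the unweighted mean.
    macro = sum(f1(*c) for c in counts.values()) / len(labels)
    return micro, macro


# Label "A" is frequent and predicted well; label "B" is rare and always missed.
gold = [{"A"}, {"A", "B"}, {"A"}, {"B"}]
predicted = [{"A"}, {"A"}, {"A"}, {"A"}]

micro, macro = micro_macro_f1(gold, predicted, labels=["A", "B"])
print(f"micro={micro:.4f} macro={macro:.4f}")
```

In this toy example the missed rare label pulls Macro-F1 well below Micro-F1, which is the same direction as the gap seen for every encoder in the table.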

3. Hierarchical vs Flat

<table>

<tr><th rowspan='2'>Text Encoders<th colspan='2'>Hierarchical<th colspan='2'>Flat

<tr><th>Micro-F1<th>Macro-F1<th>Micro-F1<th>Macro-F1

<tr><td>TextCNN<td>0.7717<td>0.5246<td>0.7367<td>0.4224

<tr><td>TextRNN<td>0.8152<td>0.5458<td>0.7546 <td>0.4505

<tr><td>RCNN<td><strong>0.8313</strong><td><strong>0.6047</strong><td><strong>0.7955</strong><td><strong>0.5123</strong>

<tr><td>FastText<td>0.6887<td>0.2701 <td>0.6865<td>0.2816

<tr><td>DRNN<td>0.7846 <td>0.5147<td>0.7506<td>0.4450

<tr><td>DPCNN<td>0.8220 <td>0.5609 <td>0.7423 <td>0.4261

<tr><td>VDCNN<td>0.7263 <td>0.3860<td>0.7110<td>0.3593

<tr><td>AttentiveConvNet<td>0.7533<td>0.4373<td>0.7511<td>0.4286

<tr><td>RegionEmbedding<td>0.7780 <td>0.4888 <td>0.7640<td>0.4617

<tr><td>Transformer<td>0.7603 <td>0.4274<td>0.7602<td>0.4339

<tr><td>Star-Transformer<td>0.7668 <td>0.4840<td>0.7618<td>0.4745

</table>

Acknowledgement

Our toolkit references the following public code and documentation:

- https://pytorch.org/docs/stable/

- https://github.com/jadore801120/attention-is-all-you-need-pytorch/

- https://github.com/Hsuxu/FocalLoss-PyTorch

- https://github.com/Shawn1993/cnn-text-classification-pytorch

- https://github.com/ailias/Focal-Loss-implement-on-Tensorflow/

- https://github.com/brightmart/text_classification

- https://github.com/NLPLearn/QANet

- https://github.com/huggingface/pytorch-pretrained-BERT

Update

- 2019-04-29, initial version