How does LongCat-Video convert text to video?

LongCat-Video's text-to-video capability is powered by a single AI model whose training included Group Relative Policy Optimization (GRPO). The specifics of the conversion process are covered in the project's technical report; at a high level, the model uses a unified architecture that handles multiple tasks, including text-to-video conversion, within one framework.

What is LongCat-Video's coarse-to-fine generation strategy?

In its coarse-to-fine strategy, LongCat-Video first generates a video at lower resolution or with less detail (the coarse stage), then progressively increases the detail and resolution (the fine stage). Working this way improves the efficiency of video generation along both the temporal and spatial axes.
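The two stages can be pictured with a toy sketch. This is an illustration under stated assumptions, not LongCat-Video's actual pipeline: the function names, the nearest-neighbour upsampling, the factor of 2, and the `refine` callback (a stand-in for the model's fine stage) are all hypothetical.

```python
def upsample_nearest(video, t_factor, s_factor):
    """Repeat frames in time and pixels in space (nearest-neighbour upsampling).

    `video` is a nested list indexed as video[t][y][x].
    """
    fine = []
    for frame in video:
        big_frame = []
        for row in frame:
            # Widen the row, then repeat it vertically.
            wide_row = [px for px in row for _ in range(s_factor)]
            big_frame.extend(list(wide_row) for _ in range(s_factor))
        # Repeat the enlarged frame in time, copying so frames stay independent.
        fine.extend([list(r) for r in big_frame] for _ in range(t_factor))
    return fine


def coarse_to_fine(coarse_video, refine, t_factor=2, s_factor=2):
    """Hypothetical two-stage flow: cheap coarse video in, refined video out."""
    upsampled = upsample_nearest(coarse_video, t_factor, s_factor)
    # In a real system `refine` would be a learned model that adds detail;
    # here it is whatever callable the caller supplies.
    return refine(upsampled)


# One coarse 2x2 frame becomes two 4x4 frames before refinement.
coarse = [[[1, 2], [3, 4]]]
fine = coarse_to_fine(coarse, refine=lambda v: v)
```

The point of the split is that the expensive generative work happens on the small coarse video, while the fine stage only has to add detail to an already coherent result.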

How was LongCat-Video trained?

LongCat-Video was trained using Group Relative Policy Optimization (GRPO) with multi-reward Reinforcement Learning from Human Feedback (RLHF), which helps it achieve competitive performance across multiple metrics.

What is the function of Group Relative Policy Optimization?

Group Relative Policy Optimization (GRPO) is the reinforcement learning method used in training LongCat-Video. It compares groups of generated samples against one another and steers the model toward the higher-scoring ones. Because it can learn from multiple rewards, it strengthens the model's video generation and processing capabilities.
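The core idea of GRPO, scoring each sample relative to the rest of its group, can be sketched in a few lines. This is a minimal illustration, not LongCat-Video's training code: the reward names, the weights, and the weighted-sum combination of rewards are assumptions made for the example.

```python
from statistics import mean, pstdev


def combine_rewards(rewards, weights):
    """Collapse several reward signals into one score (assumed: weighted sum)."""
    return sum(weights[name] * value for name, value in rewards.items())


def group_relative_advantages(scores, eps=1e-8):
    """Normalise each score against the mean and spread of its own group."""
    mu = mean(scores)
    sigma = pstdev(scores)
    return [(s - mu) / (sigma + eps) for s in scores]


# Hypothetical reward names and weights for three videos sampled from one prompt.
weights = {"visual_quality": 0.4, "motion_quality": 0.3, "text_alignment": 0.3}
group = [
    {"visual_quality": 0.9, "motion_quality": 0.5, "text_alignment": 0.7},
    {"visual_quality": 0.6, "motion_quality": 0.6, "text_alignment": 0.6},
    {"visual_quality": 0.3, "motion_quality": 0.8, "text_alignment": 0.4},
]
scores = [combine_rewards(r, weights) for r in group]
advantages = group_relative_advantages(scores)
```

Samples with a positive advantage scored above their group's average and are reinforced; those with a negative advantage are discouraged. No separate value network is needed, since the group itself serves as the baseline.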

Why does LongCat-Video use RLHF in its training?

Reinforcement Learning from Human Feedback (RLHF) is used in LongCat-Video's training to align its outputs with human preferences, so that the videos it generates are of high quality and closely resemble real-world footage. This improves the model's practical applicability and effectiveness.

How does LongCat-Video compare to other video generation models?

LongCat-Video performs competitively across multiple metrics when compared to leading open-source and commercial video generation models. How it ranks depends on the metric in question, so the best choice may vary with your requirements and context.

What is the LongCat-Video-Avatar feature?

LongCat-Video-Avatar is a feature added to the model that enables the creation of expressive, audio-driven character animation. It extends LongCat-Video's capabilities by adding a dynamic animation aspect to its video generation tasks.

What tasks can be handled natively by the LongCat-Video-Avatar feature?

The LongCat-Video-Avatar feature can natively handle tasks such as Audio-Text-to-Video conversion, Audio-Text-Image-to-Video generation, and Video Continuation.

What types of audio inputs does LongCat-Video support?

LongCat-Video supports both single-stream and multi-stream audio inputs, offering seamless compatibility for various audio input formats.

Where can I find technical reports related to LongCat-Video?

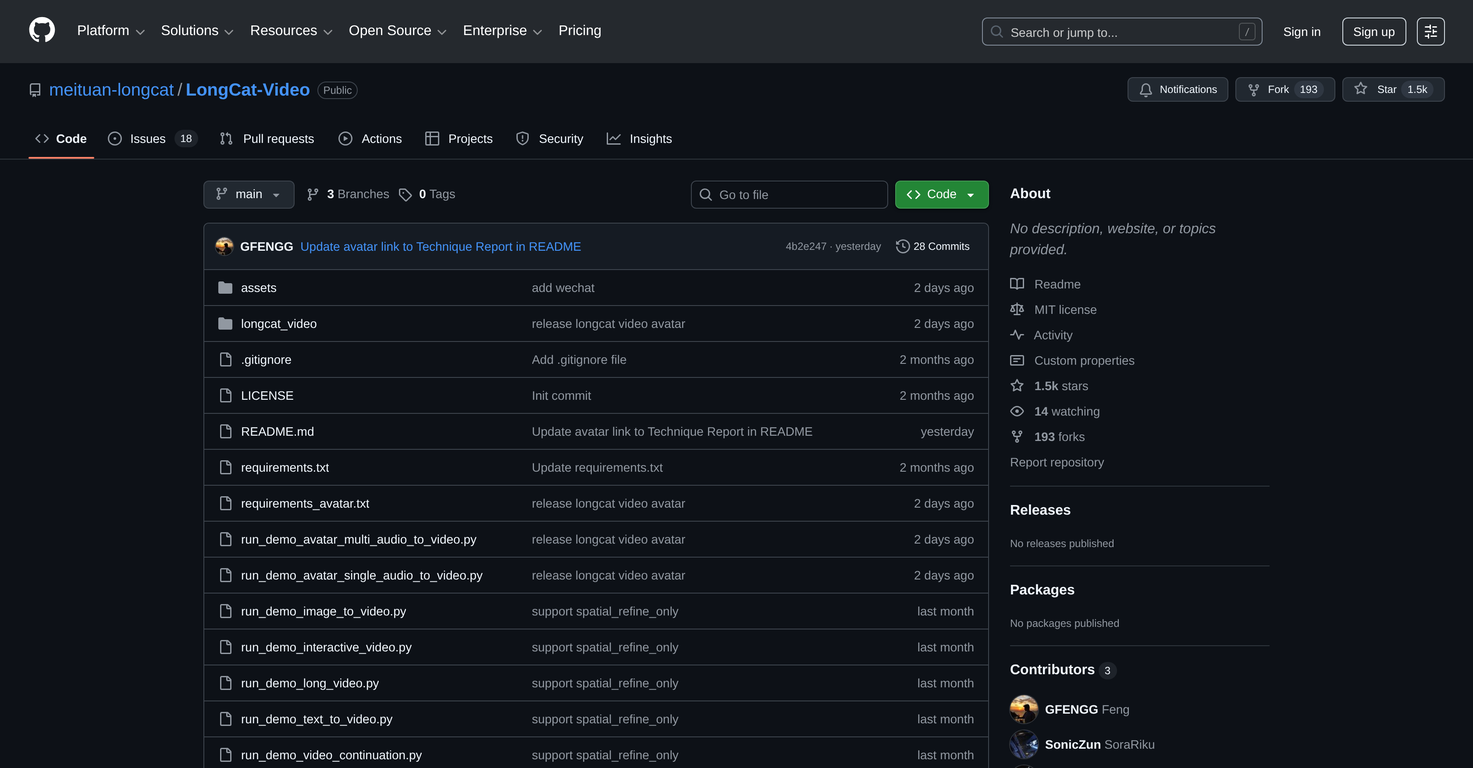

Technical reports related to LongCat-Video are openly available on GitHub, alongside the inference code, model weights, and project pages.

How can I get the model weights for LongCat-Video?

The model weights for LongCat-Video can be found through the project's GitHub page. The accompanying documentation provides detailed information about how the model was trained, the algorithms it uses, and its overall architecture and design.

What platforms support LongCat-Video?

LongCat-Video, developed by meituan-longcat, is hosted on GitHub. It is an open-source project, and its resources, including model weights and code, are available for public use and contribution.

How does LongCat-Video handle high-resolution videos?

LongCat-Video handles high-resolution video effectively, notably 720p at 30 fps. It uses a coarse-to-fine generation strategy for efficiency and employs Block Sparse Attention to improve performance at high resolutions.
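Block sparse attention saves compute by letting each block of queries attend to only a few blocks of keys instead of all of them. The sketch below shows one common way to pick those blocks: mean-pool each block, score block pairs, and keep the top-k. How LongCat-Video selects blocks is not described here, so the selection rule, the function names, and the parameters are all assumptions.

```python
def mean_pool(vectors, block_size):
    """Average consecutive vectors into one summary vector per block."""
    pooled = []
    for start in range(0, len(vectors), block_size):
        block = vectors[start:start + block_size]
        dim = len(block[0])
        pooled.append([sum(v[d] for v in block) / len(block) for d in range(dim)])
    return pooled


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def select_key_blocks(queries, keys, block_size, top_k):
    """For each query block, return the indices of the top_k key blocks.

    Full attention would then be computed only inside the selected blocks.
    """
    q_blocks = mean_pool(queries, block_size)
    k_blocks = mean_pool(keys, block_size)
    selected = []
    for q in q_blocks:
        scores = [dot(q, k) for k in k_blocks]
        ranked = sorted(range(len(k_blocks)), key=lambda j: scores[j], reverse=True)
        selected.append(sorted(ranked[:top_k]))
    return selected


# Four tokens in two blocks; each query block keeps its single best key block.
tokens = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0], [0.0, 1.0]]
blocks = select_key_blocks(tokens, tokens, block_size=2, top_k=1)
```

With `top_k` fixed, the attention cost grows roughly linearly with the number of blocks rather than quadratically, which is why this kind of sparsity matters most at high resolutions where the token count is large.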

What is the advantage of using LongCat-Video?

The advantage of using LongCat-Video lies in its range of capabilities, which include text-to-video generation, image-to-video generation, and video continuation. It is also efficient at creating long, high-resolution videos. Another benefit is its open availability on GitHub, which gives developers free access to the existing codebase, model weights, and associated technical documentation.

Will LongCat-Video be updated or improved in the future?

Updates or improvements to LongCat-Video would depend on its developers. While the current stated feature set is comprehensive, advancements in AI and video technology could prompt future updates. Any such changes would be reflected in their GitHub repository.

Is the code of LongCat-Video available to the public?

Yes, LongCat-Video's code is openly available on GitHub, along with its technical reports, model weights, and project pages, allowing anyone to access, learn from, and contribute to it.
