What is Paragraphica?

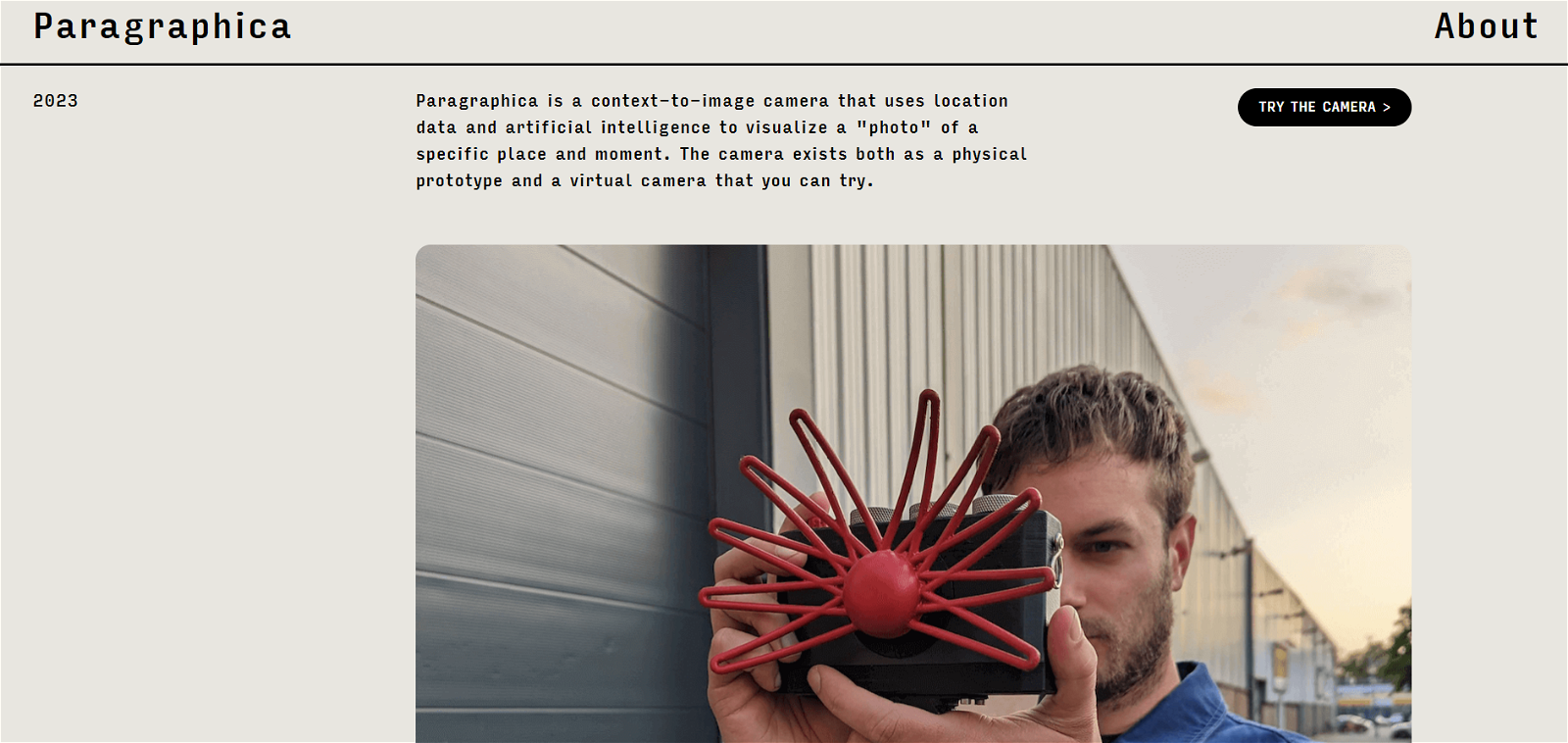

Paragraphica is a context-to-image camera that utilizes artificial intelligence and location data to transpose a unique moment and place into a photo-realistic image. It's not just a traditional camera, as it allows for deeper insights into the essence of a moment by offering a different kind of perceptual experience.

How does Paragraphica use artificial intelligence?

Paragraphica uses artificial intelligence to turn location data and a textual description of a moment and place into an image. It combines inputs such as the address, weather conditions, time of day, and nearby landmarks into a description, which the AI model then renders as a contextual 'photo'.

Can I use Paragraphica as a physical or virtual camera?

Yes, Paragraphica exists both as a physical prototype and as a virtual camera. Note that the virtual camera is subject to high traffic and may not always be available.

What information does Paragraphica need to capture a photo?

To capture a 'photo', Paragraphica gathers data including the current location's address, prevailing weather conditions, the time, and relevant nearby places.

How can I control the data and AI parameters in Paragraphica?

Paragraphica offers three physical dials that let users adjust the data and AI parameters. The first sets the radius, or scope, of the area searched for data; the second sets the noise seed for the AI image diffusion process; the third sets the guidance scale, which determines how closely the AI follows the provided location description.

What is Paragraphica's text-to-image AI technology?

Paragraphica's text-to-image AI technology is a key feature that transposes a narrative detailing a specific moment and location into a photo-realistic image. It takes the written description of the place and renders it as a rich and nuanced visual image.

Why do the photos from Paragraphica not look exactly like the location captured?

Paragraphica produces images that don't necessarily mirror the captured location. This happens because the AI interprets the location from its own model's perspective, creatively constructing a 'photo' that echoes the sentiment of the place rather than duplicating its actual visual representation.

Does Paragraphica only work with visual perception?

No, Paragraphica is not limited to only visual perception. It uses rich location data and AI image synthesis to deliver deeper insights into the moment, extending the perceptual experience beyond a human-like visual interpretation.

How does Paragraphica use location data and AI image synthesis?

Paragraphica uses location data and AI image synthesis to capture the essence of a specific environment at a given moment. It collects data through open APIs, gathering the address, weather, time of day, and nearby points of interest. The AI then transforms this information into a 'photo'.
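As a rough illustration of the step described above, the sketch below assembles collected data into a single text description. The `LocationContext` class, the `compose_prompt` function, the wording of the sentence template, and the time-of-day thresholds are all assumptions made for this example; they are not Paragraphica's actual code or prompt.

```python
from dataclasses import dataclass, field
from datetime import datetime


@dataclass
class LocationContext:
    """Hypothetical container for the data the camera is said to collect."""
    address: str
    weather: str
    temperature_c: float
    timestamp: datetime
    nearby_places: list[str] = field(default_factory=list)


def time_of_day(ts: datetime) -> str:
    """Map an hour to a coarse label (thresholds are arbitrary assumptions)."""
    hour = ts.hour
    if 5 <= hour < 12:
        return "morning"
    if 12 <= hour < 17:
        return "afternoon"
    if 17 <= hour < 21:
        return "evening"
    return "night"


def compose_prompt(ctx: LocationContext) -> str:
    """Build a short description to hand to a text-to-image model."""
    parts = [
        f"A photo taken at {ctx.address} in the {time_of_day(ctx.timestamp)}.",
        f"The weather is {ctx.weather}, {ctx.temperature_c:.0f} degrees Celsius.",
    ]
    if ctx.nearby_places:
        parts.append("Nearby there is " + ", ".join(ctx.nearby_places) + ".")
    return " ".join(parts)


# Made-up example values, not real API output.
example = LocationContext(
    address="Example Street 1, Amsterdam",
    weather="light rain",
    temperature_c=11.4,
    timestamp=datetime(2023, 5, 30, 15, 45),
    nearby_places=["a bakery", "a canal", "a bike shop"],
)
print(compose_prompt(example))
```

In a real pipeline the fields would be filled from API responses rather than hard-coded values.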

What are the software and hardware components of Paragraphica?

The software components of Paragraphica include Noodl, Python code, and Stable Diffusion API. Physically, Paragraphica is engineered with a Raspberry Pi 4, a 15-inch touchscreen, 3D printed housing, and some custom electronics.

What happens when I press the trigger on Paragraphica?

When the trigger on Paragraphica is pressed, a real-time description of the location is displayed. The camera then synthesizes this description into a 'photo', a scintigraphic representation of the text.

How do the three physical dials on Paragraphica influence the photo's appearance?

Paragraphica's three physical dials modify the photo's appearance by permitting users to control data inputs and AI parameters. Each dial configures different elements such as the radius of the area, the noise seed for image diffusion, and the guidance scale for the AI to follow the place description.
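One way to picture the three dials is as normalized positions that are scaled into parameter ranges. The sketch below is only that: the `CaptureSettings` class, the `settings_from_dials` function, and every numeric range are assumptions chosen for illustration, since the actual ranges are not documented here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CaptureSettings:
    radius_m: float        # search radius for nearby data, in meters
    seed: int              # noise seed for the diffusion process
    guidance_scale: float  # how closely the model follows the description


def lerp(lo: float, hi: float, t: float) -> float:
    """Linear interpolation with t clamped to the range [0, 1]."""
    t = max(0.0, min(1.0, t))
    return lo + (hi - lo) * t


def settings_from_dials(
    radius_dial: float, seed_dial: float, guidance_dial: float
) -> CaptureSettings:
    """Convert three dial positions in [0, 1] into capture settings.

    All ranges below are hypothetical placeholders.
    """
    return CaptureSettings(
        radius_m=lerp(10.0, 1000.0, radius_dial),
        seed=int(lerp(0, 999, seed_dial)),
        guidance_scale=lerp(1.0, 20.0, guidance_dial),
    )


settings = settings_from_dials(0.5, 0.25, 1.0)
print(settings)
```

Clamping the dial value keeps a noisy or out-of-range reading from producing an invalid parameter.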

How does Paragraphica utilize the address, weather, time of day, and nearby places in photos?

Paragraphica uses the address, weather conditions, time of day, and nearby places to capture the essence of a place at a given moment. It collects this data through open APIs and uses it to compose a narrative that becomes the basis for the generated 'photo'.

Why are the pictures from Paragraphica called 'photos'?

The images produced by Paragraphica are referred to as 'photos' in quotation marks because they are not conventional snapshots; rather, they are a nuanced reflection of the location at a specific moment. The AI model 'sees' and interprets the location, producing a visual representation that carries emotive resonance and contextual meaning.

Can I see the world from other intelligences' perspectives using Paragraphica?

Yes, by using Paragraphica, users can perceive the world from the perspective of artificial intelligence. This sort of experience engenders a greater understanding and a different perception of the world around us.

How does the radius dial on Paragraphica function?

The radius dial on Paragraphica works like the focal length of an optical lens. It controls the size of the area, measured from the camera's position, that the camera searches for places and other location data.
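To make the effect of the radius concrete, here is a minimal sketch that keeps only the places within a given distance of the camera, using the haversine great-circle formula. The function names, the coordinates, and the place list are invented for the example; how Paragraphica actually queries and filters nearby places is not specified here.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius in meters


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in meters between two latitude/longitude points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = (
        math.sin(dphi / 2) ** 2
        + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    )
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def places_within(origin, places, radius_m):
    """Return the names of places no farther than radius_m from origin."""
    lat0, lon0 = origin
    return [
        name
        for name, lat, lon in places
        if haversine_m(lat0, lon0, lat, lon) <= radius_m
    ]


# Made-up coordinates: roughly 56 m, 1 km, and 3.3 km north of the origin.
camera = (52.3700, 4.8900)
candidates = [
    ("cafe", 52.3705, 4.8900),
    ("museum", 52.3790, 4.8900),
    ("park", 52.4000, 4.8900),
]
print(places_within(camera, candidates, 100.0))
print(places_within(camera, candidates, 2000.0))
```

A small radius yields a description built from the immediate surroundings, while a large one pulls in more distant places, much as a wider lens takes in more of a scene.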

What does the second dial on Paragraphica do?

The second dial on Paragraphica produces a noise seed for the AI image diffusion process, akin to the grain in a film camera.
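Diffusion models start from random noise, and a seed fixes which noise is drawn. The sketch below shows that idea with Python's standard `random` module: the same seed reproduces the same starting noise, and a different seed gives a different one. The `initial_noise` function is a stand-in written for this example, not part of Stable Diffusion or Paragraphica.

```python
import random


def initial_noise(seed: int, size: int) -> list[float]:
    """Draw `size` Gaussian samples from a generator seeded with `seed`."""
    rng = random.Random(seed)
    return [rng.gauss(0.0, 1.0) for _ in range(size)]


same_a = initial_noise(42, 8)
same_b = initial_noise(42, 8)
other = initial_noise(43, 8)

print(same_a == same_b)  # identical seed, identical noise
print(same_a == other)   # different seed, different noise
```

With the same description and settings, keeping the seed fixed should reproduce the same image, while turning the dial changes the result in the way film grain varies from frame to frame.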

How can I use the guidance scale on Paragraphica?

The guidance scale on Paragraphica is controlled through the third dial. This parameter determines how closely the AI follows the provided location description. A higher value leads to a 'sharper' photo, while a lower value results in a 'blurrier' image.
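In Stable Diffusion style models, a guidance scale is typically applied through classifier-free guidance: the model predicts noise once with the text description and once without it, and the scale sets how far the result is pushed toward the text-conditioned prediction. The sketch below shows only that arithmetic on plain lists, assuming Paragraphica's third dial maps to this parameter; `apply_guidance` is a name made up for this example.

```python
def apply_guidance(
    uncond: list[float], cond: list[float], scale: float
) -> list[float]:
    """Blend unconditional and text-conditioned noise predictions.

    Computes uncond + scale * (cond - uncond) element by element.
    """
    return [u + scale * (c - u) for u, c in zip(uncond, cond)]


uncond_pred = [0.0, 1.0]  # toy prediction without the description
cond_pred = [1.0, 3.0]    # toy prediction with the description

print(apply_guidance(uncond_pred, cond_pred, 0.0))  # ignores the description
print(apply_guidance(uncond_pred, cond_pred, 1.0))  # plain conditioned prediction
print(apply_guidance(uncond_pred, cond_pred, 2.0))  # pushed further toward the text
```

Values above 1 exaggerate the difference the description makes, which corresponds to the 'sharper' adherence described above.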

What does Paragraphica offer beyond a traditional camera?

Beyond a traditional camera, Paragraphica offers a novel way of capturing and interpreting the world by using artificial intelligence, location data, and textual parameters to visualize a specific moment and place. Its ability to analyze and embody context-specific parameters results in an image that's more than just a visual snapshot.

Why is Noodl used in building the web app of Paragraphica?

Noodl is used to build Paragraphica's web app because it provides an interface that handles communication between the camera and the various API endpoints. This integration is what generates the location-based prompt and the image itself.