Twinny

Overview

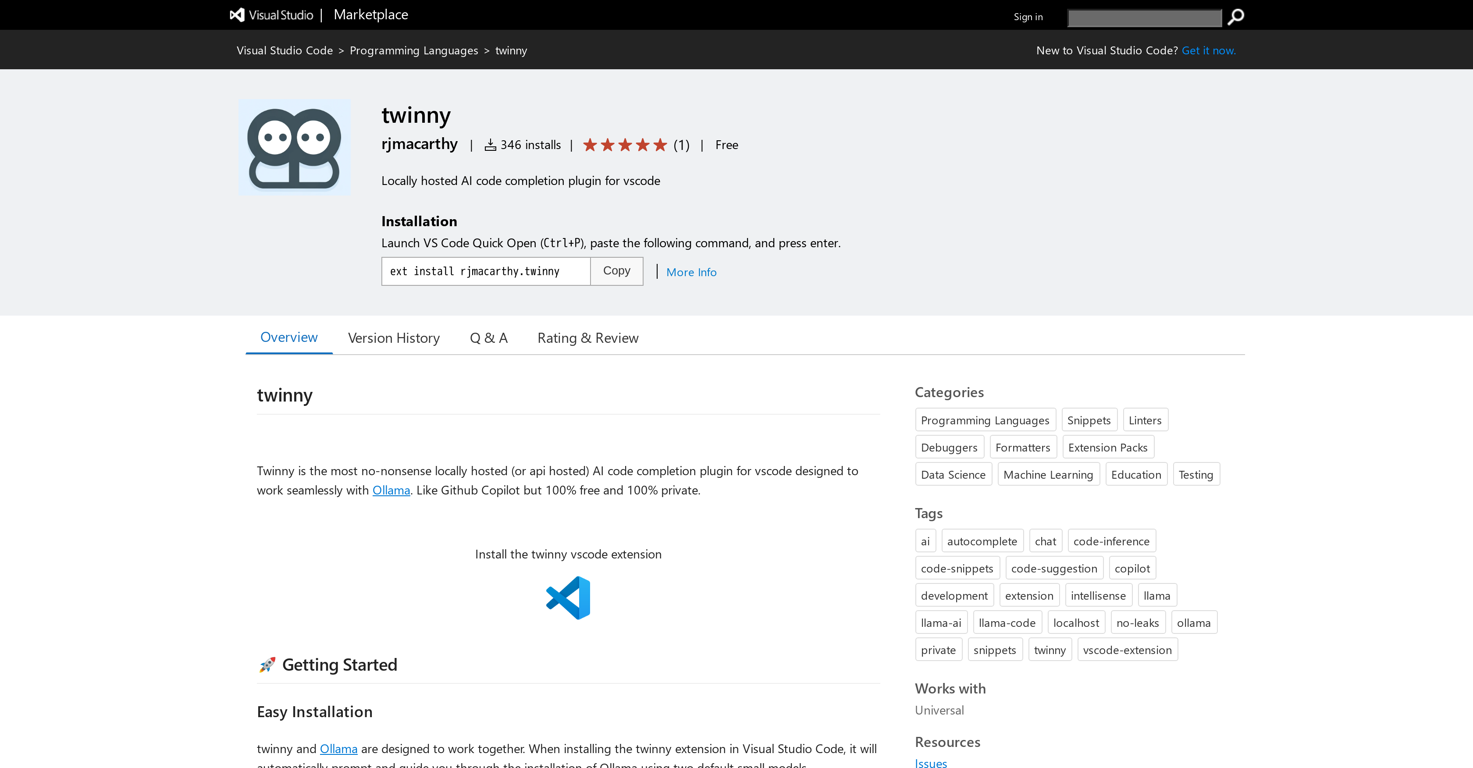

Twinny is an AI code completion extension for Visual Studio Code. It runs locally, so your code stays private, and it integrates with Ollama to provide code completions.

This straightforward plugin is comparable to GitHub Copilot; the difference lies in cost and privacy. It is free of charge and keeps your code on your own machine.

Once twinny is installed in Visual Studio Code and Ollama is running, the two work together as an interactive coding assistant that suggests code in real time.

An icon at the bottom of the editor shows that the system is operational and which models are running. Twinny supports multiple programming languages and offers a configurable endpoint and port for the Ollama API, automatic code completion, a chat feature similar to Copilot Chat, diff views for code completions, and the ability to accept solutions directly into the editor.
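Because Ollama serves its API over HTTP, a configurable endpoint and port simply decide where completion requests are sent. The Python below is a minimal sketch, not twinny's actual code: it builds (without sending) a request for Ollama's `/api/generate` endpoint from a host and port, assuming Ollama's default port 11434 and a model whose template supports the `suffix` (fill-in-the-middle) field. The function names `build_completion_request` and `send` are hypothetical, and the model name is only an example.

```python
import json
import urllib.request

# Illustrative defaults only; these are not twinny's real configuration keys.
DEFAULT_HOST = "localhost"
DEFAULT_PORT = 11434  # Ollama's default port


def build_completion_request(prefix, suffix, model,
                             host=DEFAULT_HOST, port=DEFAULT_PORT):
    """Build (but do not send) a POST to Ollama's /api/generate endpoint.

    `prefix` is the code before the cursor and `suffix` the code after it.
    Ollama only honors `suffix` for models that support fill-in-the-middle.
    """
    url = f"http://{host}:{port}/api/generate"
    payload = {
        "model": model,
        "prompt": prefix,
        "suffix": suffix,
        "stream": False,  # ask for one JSON object instead of a stream
    }
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request, timeout=30.0):
    """Send the request and return the generated text (needs a running Ollama)."""
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    return body["response"]


if __name__ == "__main__":
    req = build_completion_request(
        prefix="def add(a, b):\n    ",
        suffix="\n",
        model="codellama:code",  # example model name
    )
    print(req.full_url)  # http://localhost:11434/api/generate
```

Changing `host` or `port` is enough to point the request at an Ollama instance on another machine; `send` only succeeds if Ollama is actually listening at that address.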

Your chat history with twinny is saved per conversation, so you can easily refer back to it. The extension is easy to install, aims to deliver fast and accurate code completions, and is freely available.

Top alternatives

-

Johnson🙏 (122 karma), Jul 16, 2025, on OnSpace.AI - No Code App Builder: Would rate 4.9 if possible, but rounding up to 5 stars because this app truly excels compared to other AI coding tools. Why 5 stars: best-in-class AI coding assistance; huge improvements over competitors; actually works for real development. Real impact: I successfully built and published an actual app using this tool, which is game-changing for non-developers like me. Bottom line: yes, there's room for improvement, but this is already the top AI coding app available. The fact that ordinary people can create real apps with it says everything. Perfect for anyone wanting to turn ideas into actual apps!

-

Cursor (v2): Much stronger coding performance, with big benchmark gains over Composer 1.5 across CursorBench, Terminal-Bench 2.0, and SWE-bench Multilingual. Better long-horizon task solving, aimed at coding jobs that require hundreds of actions instead of shorter edit loops. Stronger base model training from Cursor’s first continued pretraining run, giving reinforcement learning a better foundation than before. Better price-to-intelligence balance, positioned as a much stronger model without giving up cost efficiency. Adds a faster same-intelligence variant and makes fast mode the default option.

-

"Why is this website so ugly? Our goal is to rapidly make the software better, not to have a shiny website." Love it!

-

CodingFleet has evolved a lot over the past year. We added the latest flagship coding models including GPT-5.4, Grok-4.20 Beta, Gemini 3.1 Pro, Claude Sonnet 4.6 / Opus 4.6, Qwen 3.5, MiniMax M2.5, and newer Codex reasoning models. The platform also gained major new capabilities like Image Generation, Diagram Generator, Diagram-to-Code, vision support, improved Web Access, and MCP integrations. For builders, Code Execution became much more powerful with better sandboxes, filesystem tools, shell/database access, and improved long-running workflows. We also significantly improved the product experience with larger context limits, broader file upload support, Chat Memory, cross-tab/live chats, a much better dashboard, and detailed usage statistics. On the business side, we refreshed pricing with a stronger Pro plan, cheaper credit purchases, and permanent Pro access.

-

Code varies from run to run. Still, it is a helpful app. You can specify coding languages that are not in the dropdown menu.