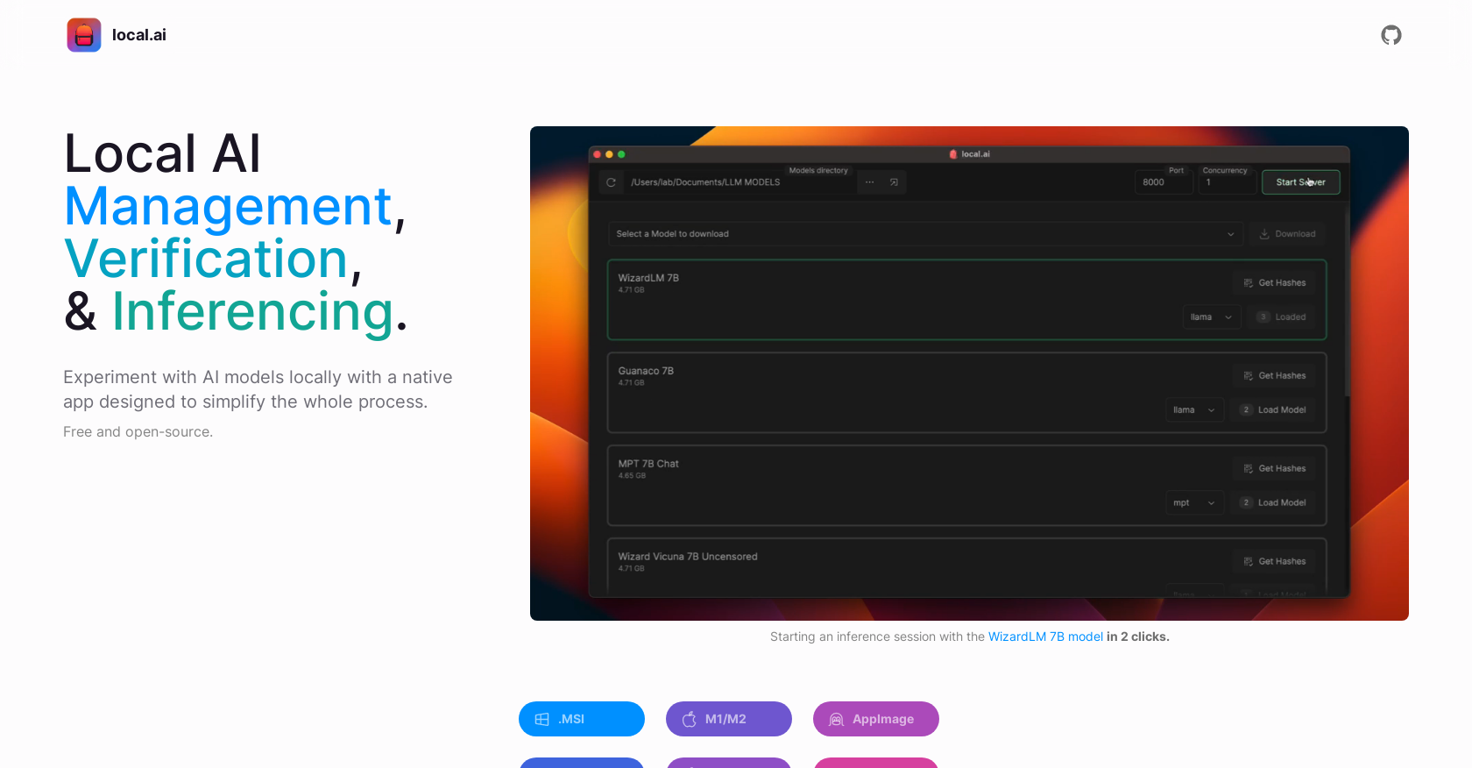

What are the main features of Localai?

Localai offers several key features: CPU inferencing that adapts to the available threads; GGML quantization with options for q4, q5.1, q8, and f16; model management with resumable and concurrent downloading and usage-based sorting; digest verification using the BLAKE3 and SHA256 algorithms, with a known-good model API, license and usage chips, and a quick check using BLAKE3; and an inferencing server with a quick inference UI, write support for .mdx files, and options for inference parameters and remote vocabulary.

What platforms is Localai compatible with?

Localai is compatible with Mac (M1/M2 and Intel), Windows, and Linux platforms.

How can I install Localai on my system?

You can install Localai by downloading the installer for your platform from the Localai GitHub page: the MSI for Windows, the .dmg file for Mac (both M1/M2 and Intel architectures), or either the AppImage or .deb file for Linux.

How large is Localai on my Windows, Mac, or Linux device?

Localai takes up less than 10MB on a Windows, Mac, or Linux device.

What is the function of the inferencing server feature?

The inferencing server feature of Localai allows users to start a local streaming server for AI inferencing, making it easier to perform AI experiments and gather the results.

How do I start a local streaming server for AI inferencing using Localai?

You can start a local streaming server for AI inferencing using Localai by loading a model and then starting the server, a process which requires only two clicks.

Can I perform AI experiments with Localai without owning a GPU?

Yes, Localai allows users to perform AI experiments locally without the need for a GPU.
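The feature list describes CPU inferencing as adapting to the available threads. How Localai does this internally is not documented here, so the following is only a minimal sketch of the general idea in Python. The function name `pick_thread_count` and the `reserve` parameter are hypothetical and are not part of Localai.

```python
import os


def pick_thread_count(reserve=1):
    """Choose a worker thread count from the CPUs the OS reports.

    `reserve` is a hypothetical knob: the number of logical CPUs left free
    for the rest of the system. This is an assumption, not Localai's logic.
    """
    # os.cpu_count() may return None when the count cannot be determined.
    available = os.cpu_count() or 1
    # Always use at least one thread, even on a single-core machine.
    return max(1, available - reserve)


if __name__ == "__main__":
    print("threads to use:", pick_thread_count())
```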

Does Localai support GGML quantization?

Yes, Localai supports GGML quantization with options for q4, q5.1, q8, and f16.

How does Localai manage AI models?

Localai provides a centralized location for users to keep track of their AI models. It supports resumable and concurrent model downloading and usage-based sorting, and it is agnostic to directory structure.
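Resumable downloading is commonly built on HTTP range requests. The sketch below shows that general technique in Python, assuming the server supports the `Range` header. It is not Localai's own implementation, and the names `resume_headers` and `resume_download` are hypothetical.

```python
import os
import urllib.request


def resume_headers(partial_path):
    """Return headers that ask the server for only the missing bytes."""
    # If a partial file exists, request the remainder starting at its size.
    offset = os.path.getsize(partial_path) if os.path.exists(partial_path) else 0
    return {"Range": f"bytes={offset}-"} if offset > 0 else {}


def resume_download(url, partial_path, chunk_size=1 << 20):
    """Fetch `url` into `partial_path`, continuing a partial file if possible."""
    request = urllib.request.Request(url, headers=resume_headers(partial_path))
    with urllib.request.urlopen(request) as response:
        # 206 means the server honored the Range header, so append.
        # Any other success status means a full body, so start over.
        mode = "ab" if response.status == 206 else "wb"
        with open(partial_path, mode) as out:
            while True:
                chunk = response.read(chunk_size)
                if not chunk:
                    break
                out.write(chunk)
```

This sketch does not handle the case where the file is already complete (a server would typically answer such a range request with status 416), and it downloads one file at a time. Concurrent downloading could be layered on top, for example by running `resume_download` in a thread pool.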

What steps does Localai take to ensure the integrity of downloaded models?

To ensure the integrity of downloaded models, Localai offers digest verification using the BLAKE3 and SHA256 algorithms. The feature covers digest computation, a known-good model API, license and usage chips, and a quick check using BLAKE3.
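To illustrate what digest verification involves, here is a minimal Python sketch that computes a file's SHA256 digest in chunks and compares it with an expected value. It uses only the standard library, so it covers SHA256 only; BLAKE3 is not in Python's standard library and would need a third-party package. The function names are hypothetical, and where the expected digest comes from (for example a known-good list) is an assumption left to the caller.

```python
import hashlib
import hmac


def sha256_of_file(path, chunk_size=1 << 20):
    """Compute the SHA256 hex digest of a file without loading it all at once."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        # Read fixed-size chunks until read() returns an empty bytes object.
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_known_good(path, expected_hex):
    """Return True if the file's digest equals the expected hex digest."""
    # Normalize whitespace and case before comparing the two hex strings.
    return hmac.compare_digest(sha256_of_file(path), expected_hex.strip().lower())
```

Reading in chunks matters here because model files are often several gigabytes, far more than is practical to hold in memory for hashing.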

Do I have to pay to use Localai?

No, Localai is completely free to use.

Does Localai offer a GPU inferencing feature?

Currently, Localai offers CPU inferencing only; GPU inferencing is listed as an upcoming feature.

What options does Localai offer for inference parameters and remote vocabulary?

Localai offers a quick inference UI, supports writing to .mdx files, and includes options for inference parameters and remote vocabulary.

Does Localai require technical setup for local AI experimentation?

No, Localai does not require any technical setup for local AI experimentation. It provides a user-friendly, efficient environment for running experiments.

Can I keep track of my AI models using Localai?

Yes, Localai allows users to keep track of their AI models in a centralized location.

How does Localai verify the downloaded models?

Localai verifies downloaded models through digest verification with the BLAKE3 and SHA256 algorithms. This includes digest computation, a known-good model API, license and usage chips, and a quick check using BLAKE3.

Does Localai support concurrent model downloading?

Yes, Localai supports concurrent model downloading.

What usage-based sorting options does Localai offer?

Localai offers usage-based sorting, which allows users to organize their models based on how often they use them.
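As a rough illustration of usage-based sorting, the Python sketch below orders a list of models by how often each has been used. The `ModelEntry` type, its `use_count` field, and the alphabetical tie-break are hypothetical and only stand in for whatever Localai tracks internally.

```python
from dataclasses import dataclass


@dataclass
class ModelEntry:
    name: str        # hypothetical display name of a model file
    use_count: int   # hypothetical counter of how often it was loaded


def sort_by_usage(models):
    """Return models ordered most-used first, ties broken by name."""
    return sorted(models, key=lambda m: (-m.use_count, m.name))


if __name__ == "__main__":
    library = [
        ModelEntry("model-b", 2),
        ModelEntry("model-a", 2),
        ModelEntry("model-c", 9),
    ]
    for entry in sort_by_usage(library):
        print(entry.name, entry.use_count)
```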

How memory-efficient is Localai?

Localai is memory-efficient thanks to its Rust backend, which keeps it compact and light on resources.

Is Localai open-source and where can I get the source code?

Yes, Localai is open-source, and the source code can be obtained from the GitHub page.