What are NVIDIA NIM APIs and how can they be used?

NVIDIA NIM APIs are tools that enable users to leverage NIM's functionalities and resources to build AI applications. They allow users to implement leading AI models, ensure secure agent execution, restrict data access for user data protection, and launch GPU instances for faster and more efficient AI model training and execution.

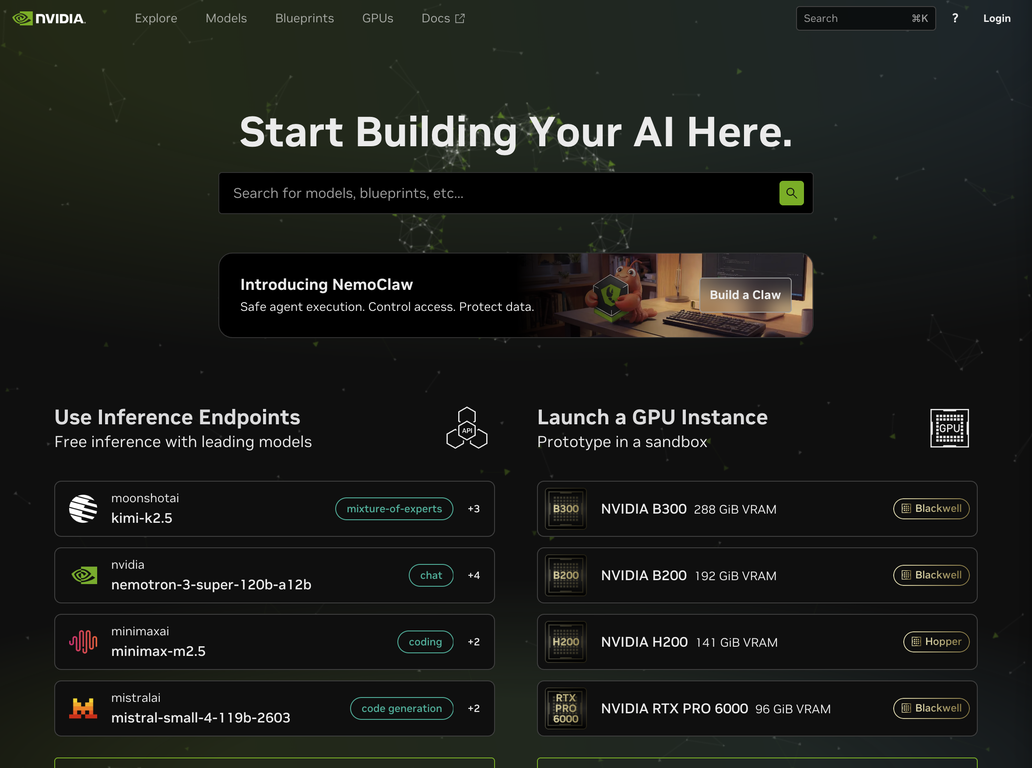

What types of GPU models does NVIDIA NIM offer?

NVIDIA NIM offers a range of GPU models to meet different user needs. These include the NVIDIA B300 with 288 GiB VRAM, the NVIDIA B200 with 192 GiB VRAM, the NVIDIA H200 with 141 GiB VRAM, and the compact NVIDIA RTX PRO 6000 with 96 GiB VRAM.
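
As a rough illustration of how the VRAM figures above might guide a choice, the sketch below estimates the memory needed for a model's weights and picks the smallest listed GPU that fits. The VRAM values come from the answer above; the 2 bytes per parameter (16-bit weights) and the 1.2x overhead multiplier are assumptions chosen for illustration, not NVIDIA guidance, and the function names are hypothetical.

```python
# VRAM figures (GiB) as listed in the answer above.
GPU_VRAM_GIB = {
    "NVIDIA B300": 288,
    "NVIDIA B200": 192,
    "NVIDIA H200": 141,
    "NVIDIA RTX PRO 6000": 96,
}

GIB = 1024 ** 3  # bytes in one GiB


def weights_gib(num_params: float, bytes_per_param: int = 2) -> float:
    """Approximate memory for the model weights alone, in GiB.

    bytes_per_param=2 assumes 16-bit weights (an illustrative default).
    """
    return num_params * bytes_per_param / GIB


def smallest_fitting_gpu(num_params: float, bytes_per_param: int = 2,
                         overhead: float = 1.2):
    """Return the listed GPU with the least VRAM that still fits, or None.

    overhead is an assumed multiplier for runtime memory beyond the weights.
    """
    needed = weights_gib(num_params, bytes_per_param) * overhead
    candidates = [(vram, name) for name, vram in GPU_VRAM_GIB.items()
                  if vram >= needed]
    return min(candidates)[1] if candidates else None


if __name__ == "__main__":
    for params in (8e9, 70e9, 405e9):
        print(f"{params:.0e} params -> {smallest_fitting_gpu(params)}")
```

Under these assumptions, a single GPU is not always enough: a result of `None` means the estimate exceeds every listed card, so the workload would need a smaller weight format or more than one GPU.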

How does NVIDIA NIM assist with AI prototyping?

NVIDIA NIM facilitates AI prototyping by offering a sandbox environment. In this dedicated space, users can experiment, test theories, and fine-tune their AI models without affecting their production environment or real-world data.

What resources does NVIDIA NIM provide for building AI applications from scratch?

For building AI applications from scratch, NVIDIA NIM provides model blueprints, workflows, and sample code. Together with the platform's GPU instances and inference endpoints, these resources give users a starting point for developing their own AI applications.

Can you elaborate on the workflows and sample code offered by NVIDIA NIM?

The workflows and sample code offered by NVIDIA NIM guide users through the process of building AI applications. A workflow is a series of tasks or operations designed to achieve a particular result, while sample code consists of pre-written programs or scripts that users can modify to fit their specific needs.

How can the inference endpoints in NVIDIA NIM be utilized?

The inference endpoints in NVIDIA NIM are tools integrated with leading AI models. They allow users to predict outcomes or derive insights from the data they provide, which supports decision making and strategy formulation.
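
To make the idea of calling an inference endpoint concrete, here is a minimal sketch that builds an HTTP request using only the Python standard library. Everything specific in it is an assumption for illustration: the endpoint URL and model name are placeholders, and the chat-style JSON payload and bearer-token header are a common convention rather than something this FAQ confirms. Check the official documentation for the actual URL, model identifiers, and request schema.

```python
import json
import urllib.request

# Assumptions, not taken from this FAQ: both values below are placeholders.
ENDPOINT_URL = "https://example.invalid/v1/chat/completions"  # hypothetical
MODEL_NAME = "example/model-name"  # hypothetical


def build_request(prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a POST request carrying a chat-style payload."""
    payload = {
        "model": MODEL_NAME,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": 256,
    }
    return urllib.request.Request(
        ENDPOINT_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


req = build_request("Summarize last quarter's sales trends.",
                    api_key="YOUR_API_KEY")

# Sending is left commented out so the sketch runs without network access:
# with urllib.request.urlopen(req) as resp:
#     print(json.load(resp))
```

Keeping request construction separate from sending makes it easy to inspect the payload and headers before any real call is made.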

What is the role of predictive modeling in NVIDIA NIM?

Predictive modeling in NVIDIA NIM involves using AI models to predict outcomes based on provided data. Through the platform's inference endpoints, users can input data and the model will output a prediction, assisting in decision making and future planning.
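
The "data in, prediction out" flow described above can be sketched as a small function that extracts the model's answer from a response body. The response shape used here (a `choices` list containing a `message` with `content`) is an assumption modeled on a common chat-completion convention, and the sample response is invented for illustration; the actual schema may differ.

```python
import json

# Hypothetical response body, assuming a chat-completion style JSON schema.
sample_response = json.dumps({
    "choices": [
        {
            "message": {
                "role": "assistant",
                "content": "Demand is likely to rise next quarter.",
            }
        }
    ]
})


def extract_prediction(raw_body: str) -> str:
    """Return the text of the first choice, or raise if there is none."""
    data = json.loads(raw_body)
    choices = data.get("choices") or []
    if not choices:
        raise ValueError("response contained no choices")
    return choices[0]["message"]["content"].strip()


prediction = extract_prediction(sample_response)
print(prediction)
```

Raising an error on an empty response, rather than returning an empty string, keeps a missing prediction from being mistaken for a real one further along in a decision-making pipeline.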

What data protection measures does NVIDIA NIM have in place?

NVIDIA NIM has several data protection measures in place, such as secure agent execution through NemoClaw that reduces the risks associated with agent execution, and restrictive data access functionality that ensures user data protection.

Can you describe the restrictive data access feature of NVIDIA NIM?

The restrictive data access feature of NVIDIA NIM allows users to secure and control access to their data, ensuring its protection. However, the specifics of how this feature operates aren't detailed on their website.

How can I launch GPU instances using NVIDIA NIM?

You can launch GPU instances using NVIDIA NIM to enhance the speed and efficiency of AI model training and execution. While the exact steps for launching these instances aren't provided on their website, it's clear that this feature boosts the performance, agility, and response times of AI applications.

Can you explain the model blueprints provided by NVIDIA NIM?

Model blueprints provided by NVIDIA NIM are frameworks or templates that guide users in building AI models. They provide a structured approach to model creation, offering crucial guidance with respect to various parameters and design elements.

Does NVIDIA NIM offer any guides for setting up NemoClaw?

Yes, NVIDIA NIM offers guides for setting up NemoClaw. While the site doesn't detail the content of these guides, they likely explain how to set up, configure, and deploy NemoClaw, your personal AI agent, within the NIM environment.

What is the VRAM capacity of the B300 GPU model in NVIDIA NIM?

The VRAM capacity of the B300 GPU model in NVIDIA NIM is 288 GiB. This is the largest capacity among the listed GPU models, which makes it suitable for memory-intensive AI tasks.

What are the specifications of the RTX PRO 6000 GPU model in NVIDIA NIM?

The RTX PRO 6000, offered in NVIDIA NIM, has 96 GiB VRAM. Further specifications haven't been detailed on their website, but this GPU model is positioned as a compact yet robust choice for various AI application needs.

Does NVIDIA NIM provide technical documentation?

Yes, NVIDIA NIM provides technical documentation. It offers guides and resources that cover key processes such as setting up NemoClaw, launching GPU instances, and using workflows and sample code to build AI applications.

How can I start building my AI with NVIDIA NIM?

You can start building your AI with NVIDIA NIM by working with its resources, such as model blueprints, workflows, and sample code. Launching GPU instances, prototyping in the sandbox environment, and deploying leading models through the APIs are some of the activities you'll likely engage in as you start your AI project.

What type of applications can I develop with NVIDIA NIM?

With NVIDIA NIM, you can develop enterprise-grade generative AI applications. Its suite of resources and functionalities, including leading AI models, secure agent execution, GPU instances, and prediction capabilities, allows users to create a variety of AI applications tailored to their respective enterprise needs.