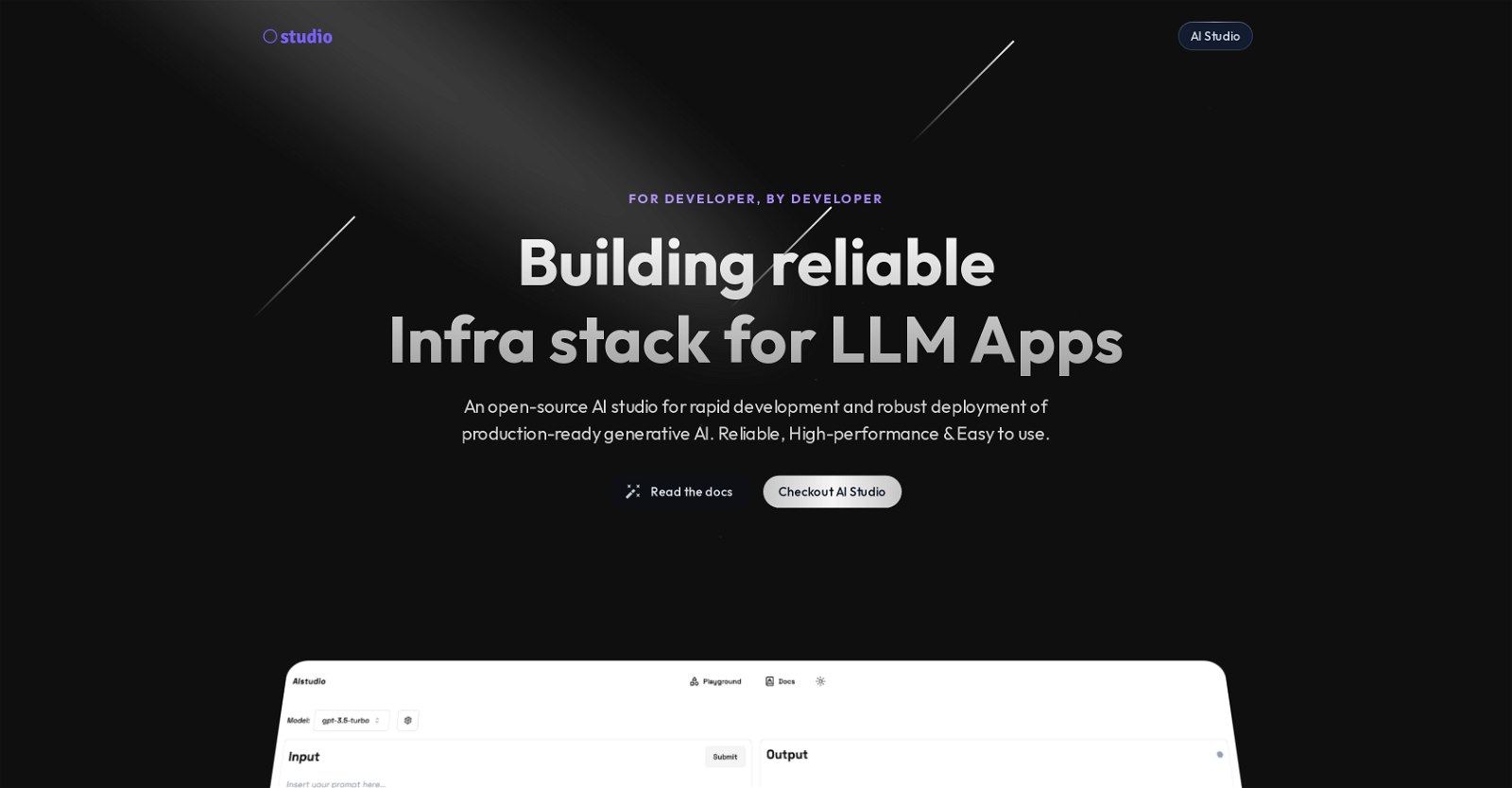

What is Missing Studio?

Missing Studio is an open-source AI studio that provides developers with the required infrastructure for rapidly developing and robustly deploying generative AI applications that are ready for production use.

What makes Missing Studio different from other AI studios?

Missing Studio differs from other AI studios by providing infrastructure that emphasizes high performance and ease of use. With features such as the Universal API (AI Router), API management, load balancing, automatic retries, and 'Semantic Caching', it offers a comprehensive package for generative AI development and deployment.

How does Missing Studio's AI Router work?

Missing Studio's AI Router is a Universal API that eliminates the need to manage separate APIs for different large language models (LLMs). It serves as a single point of access to various LLMs, enabling seamless integration with multiple providers such as OpenAI, Anthropic, Cohere, and more.
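To illustrate the single-point-of-access idea, here is a minimal sketch in Python. It is not Missing Studio's actual API: the `UniversalRouter` class and the two adapter functions are hypothetical names, and the adapters return canned strings where a real adapter would call the provider's SDK.

```python
from typing import Callable, Dict

# Hypothetical provider adapters. A real adapter would translate the request
# into the provider's own format and call its SDK or HTTP API.
def openai_adapter(prompt: str) -> str:
    return f"[openai] {prompt}"

def anthropic_adapter(prompt: str) -> str:
    return f"[anthropic] {prompt}"

class UniversalRouter:
    """Single entry point that dispatches a prompt to a named provider."""

    def __init__(self) -> None:
        self._providers: Dict[str, Callable[[str], str]] = {}

    def register(self, name: str, adapter: Callable[[str], str]) -> None:
        self._providers[name] = adapter

    def complete(self, provider: str, prompt: str) -> str:
        if provider not in self._providers:
            raise KeyError(f"unknown provider: {provider}")
        return self._providers[provider](prompt)

router = UniversalRouter()
router.register("openai", openai_adapter)
router.register("anthropic", anthropic_adapter)

# The caller uses one method regardless of which provider handles the request.
print(router.complete("openai", "hello"))
print(router.complete("anthropic", "hello"))
```

The caller only ever sees `complete`, so switching providers is a one-argument change rather than a rewrite against a different API.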

What is the purpose of the Universal API in Missing Studio?

The Universal API in Missing Studio improves reliability and removes the need to learn and manage a separate API for each LLM provider. It distributes incoming requests efficiently among models, falls back automatically to alternative models when one fails, and retries failed requests.

What are some features of Missing Studio's API management?

Missing Studio's API management includes load balancing, which distributes incoming requests efficiently among models; exponential retries; automatic fallback to alternative models; and the ability to switch seamlessly between multiple LLM providers.

How does Missing Studio handle load balancing?

Missing Studio handles load balancing by distributing incoming requests efficiently across models. This enables optimal usage of resources and ensures no single model is overloaded with the influx of requests.
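One common way to distribute requests is weighted round-robin. The sketch below shows that general technique, not necessarily the strategy Missing Studio uses; the model names and weights are made up for illustration.

```python
import itertools
from typing import Dict, Iterator, List

def weighted_round_robin(weights: Dict[str, int]) -> Iterator[str]:
    """Yield model names forever, each one in proportion to its weight."""
    expanded: List[str] = [
        name for name, weight in weights.items() for _ in range(weight)
    ]
    if not expanded:
        raise ValueError("at least one model needs a positive weight")
    return itertools.cycle(expanded)

# Hypothetical models: model-a should receive twice as many requests as model-b.
picker = weighted_round_robin({"model-a": 2, "model-b": 1})
first_six = [next(picker) for _ in range(6)]
print(first_six)
```

Each incoming request calls `next(picker)` to choose its target, so over time the traffic split matches the configured weights.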

What is the process of automatic fallback in Missing Studio?

In Missing Studio, automatic fallback switches to an alternative model if the primary model fails. This improves reliability and keeps the service running.
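A fallback chain can be sketched as trying each model in priority order and returning the first successful response. This is an illustrative sketch only: `call_with_fallback`, `primary`, and `backup` are hypothetical names, and the two model functions simulate a failure and a success.

```python
from typing import Callable, List, Sequence, Tuple

def call_with_fallback(
    prompt: str, models: Sequence[Tuple[str, Callable[[str], str]]]
) -> Tuple[str, str]:
    """Try each (name, call) pair in order; return the first success."""
    errors: List[str] = []
    for name, call in models:
        try:
            return name, call(prompt)
        except Exception as exc:  # any failure triggers the next model
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all models failed: " + "; ".join(errors))

# Simulated models: the primary always times out, the backup answers.
def primary(prompt: str) -> str:
    raise TimeoutError("primary timed out")

def backup(prompt: str) -> str:
    return f"backup answer to: {prompt}"

used, answer = call_with_fallback(
    "hello", [("primary", primary), ("backup", backup)]
)
print(used, "->", answer)
```

Returning the name of the model that answered makes it possible to log how often the fallback path is taken.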

What is the role of 'Semantic Caching' in Missing Studio?

'Semantic Caching' in Missing Studio is a caching mechanism that reduces cost and latency. Serving a stored response instead of sending a new request to the model improves overall performance and can contribute to significant cost savings.
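In general, a semantic cache returns a stored response when a new prompt is close enough in meaning to a cached one, rather than requiring an exact text match. The sketch below shows the idea under loud assumptions: `embed` is a toy bag-of-words stand-in for a real embedding model, the 0.8 similarity threshold is arbitrary, and none of this reflects how Missing Studio implements its cache.

```python
import math
from collections import Counter
from typing import List, Optional, Tuple

def embed(text: str) -> Counter:
    """Toy embedding: word counts. A real cache would use an embedding model."""
    return Counter(text.lower().split())

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[token] * b[token] for token in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0

class SemanticCache:
    def __init__(self, threshold: float = 0.8) -> None:
        self.threshold = threshold  # assumed cutoff for "similar enough"
        self._entries: List[Tuple[Counter, str]] = []

    def put(self, prompt: str, response: str) -> None:
        self._entries.append((embed(prompt), response))

    def get(self, prompt: str) -> Optional[str]:
        """Return the best-matching cached response, or None on a miss."""
        query = embed(prompt)
        best_score, best_response = 0.0, None
        for vector, response in self._entries:
            score = cosine(query, vector)
            if score > best_score:
                best_score, best_response = score, response
        return best_response if best_score >= self.threshold else None

cache = SemanticCache(threshold=0.8)
cache.put("what is the capital of france", "Paris")
hit = cache.get("what is the capital of france ?")   # near-duplicate prompt
miss = cache.get("how do I bake bread")              # unrelated prompt
print(hit, miss)
```

A hit skips the model call entirely, which is where the cost and latency savings come from; the threshold trades hit rate against the risk of returning an answer to a different question.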

What is the function of Missing Studio's AI gateway?

The AI gateway in Missing Studio provides visibility, control, and insights into API usage by your applications. It logs all requests for monitoring and debugging and helps you understand costs per user, request, and model.

How does Missing Studio aid in the monitoring and debugging process?

Missing Studio aids monitoring and debugging by logging all requests and tracing the journey of each one. It helps you understand the performance and cost associated with users, requests, and models, and provides the insights needed to make data-driven decisions.

What is the purpose of API key management in Missing Studio?

API key management in Missing Studio serves the purpose of safeguarding your primary credentials. It makes it easy to revoke or renew them for better access control.
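One way to protect a primary credential is to hand out revocable virtual keys that stand in for it. The sketch below illustrates that pattern only; the `KeyVault` class, the `vk_` prefix, and the placeholder primary key are hypothetical and are not Missing Studio's actual interface.

```python
import secrets
from typing import Dict, Optional

class KeyVault:
    """Issues revocable virtual keys so the primary key is never shared."""

    def __init__(self, primary_key: str) -> None:
        self._primary_key = primary_key
        self._virtual_keys: Dict[str, str] = {}  # virtual key -> label

    def issue(self, label: str) -> str:
        key = "vk_" + secrets.token_hex(16)
        self._virtual_keys[key] = label
        return key

    def revoke(self, key: str) -> bool:
        """Return True if the key existed and is now revoked."""
        return self._virtual_keys.pop(key, None) is not None

    def resolve(self, key: str) -> Optional[str]:
        """Map a valid virtual key to the primary key; None if revoked/unknown."""
        return self._primary_key if key in self._virtual_keys else None

vault = KeyVault("sk-primary-placeholder")
staging_key = vault.issue("staging")
print(vault.resolve(staging_key) is not None)  # key works while active
vault.revoke(staging_key)
print(vault.resolve(staging_key))              # revoked key no longer resolves
```

Because clients only hold virtual keys, a leaked key can be revoked without rotating the primary credential.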

How does Missing Studio provide safeguards for my API credentials?

Missing Studio safeguards your API credentials through its API key management functionality, which lets you easily renew or revoke primary credentials for better access control.

How can I use Missing Studio's playground for experimenting with LLMs?

Missing Studio provides a playground where you can experiment with LLMs. It offers a user-friendly environment for comparing models and judging their production readiness, so you can explore different LLMs and choose the best fit for your production needs.

What are some of the LLM providers that Missing Studio integrates with?

Missing Studio integrates with numerous LLM providers such as OpenAI, Anthropic, Cohere, and more. Through its Universal API, it offers seamless integration with these providers.

How does Missing Studio help in understanding user, request and model costs?

Missing Studio helps in understanding user, request, and model costs by monitoring usage, tracking all LLM requests, and providing insights about API application usage. This helps developers to optimize costs and performance.
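As a rough illustration of how logged requests can be turned into cost insights, the sketch below aggregates cost per user and per model. The `RequestLog` fields, the model names, and the per-1,000-token prices are all invented for the example and do not come from Missing Studio.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List

@dataclass
class RequestLog:
    user: str
    model: str
    tokens: int

# Hypothetical prices in dollars per 1,000 tokens.
PRICE_PER_1K: Dict[str, float] = {"model-a": 0.002, "model-b": 0.01}

def cost_by(logs: List[RequestLog], key: str) -> Dict[str, float]:
    """Sum request costs grouped by a log attribute such as 'user' or 'model'."""
    totals: Dict[str, float] = defaultdict(float)
    for log in logs:
        cost = log.tokens / 1000 * PRICE_PER_1K[log.model]
        totals[getattr(log, key)] += cost
    return dict(totals)

logs = [
    RequestLog(user="alice", model="model-a", tokens=1000),
    RequestLog(user="alice", model="model-b", tokens=500),
    RequestLog(user="bob", model="model-a", tokens=2000),
]

per_user = cost_by(logs, "user")
per_model = cost_by(logs, "model")
print(per_user)
print(per_model)
```

Grouping the same log records by different attributes answers both "which users cost the most" and "which models cost the most" without storing anything extra.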

How does Missing Studio enhance the reliability and performance of generative AI applications?

Missing Studio enhances the reliability and performance of generative AI applications by providing a robust infrastructure, Universal API, load balancing, automatic fallback, and 'Semantic Caching'. It ensures high performance and reliability in developing and deploying such applications.

How can Missing Studio help me in API application optimization?

Missing Studio aids in API application optimization by logging all requests for monitoring and debugging. It helps you trace the journey of each request and analyze usage, request tokens, caching, and more. It also offers features such as remote cache, rate limits, and automatic retries to optimize performance.

What does the exponential retries feature in Missing Studio do?

The exponential retries feature in Missing Studio retries failed requests using exponential backoff. When a request fails, Missing Studio retries it after increasingly longer delays, improving the chances that it eventually completes.
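The general technique of retrying with exponential backoff can be sketched as follows. The attempt limit, base delay, and growth factor are assumptions chosen for illustration, not Missing Studio's actual settings, and `retry_with_backoff` is a hypothetical helper name.

```python
import time
from typing import Callable, List, TypeVar

T = TypeVar("T")

def retry_with_backoff(
    call: Callable[[], T],
    max_attempts: int = 4,
    base_delay: float = 0.5,
    factor: float = 2.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call `call` until it succeeds, waiting base_delay * factor**n between tries."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return call()
        except Exception:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the last error
            sleep(base_delay * factor ** attempt)
    raise AssertionError("unreachable")

# Simulated flaky request: fails twice, then succeeds.
attempts = {"count": 0}

def flaky() -> str:
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise ConnectionError("temporary failure")
    return "ok"

# Record the delays instead of actually sleeping, to show the schedule.
delays: List[float] = []
result = retry_with_backoff(flaky, sleep=delays.append)
print(result, delays)
```

Production implementations often add random jitter to each delay so that many clients retrying at once do not hit the provider in lockstep.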

How does Missing Studio aid in managing multiple APIs?

Missing Studio aids in managing multiple APIs by providing a Universal API, called the AI Router. It removes the need to manage separate APIs from different LLM providers, which improves reliability and significantly reduces complexity.

What are some best practices for working with Missing Studio to develop and deploy generative AI applications?

Best practices for developing and deploying generative AI applications with Missing Studio include using the Universal API for seamless integration with multiple LLM providers, leveraging 'Semantic Caching' to reduce cost and latency, using load balancing for efficient request distribution, enabling automatic fallback for increased reliability, and actively reviewing request tracing and usage monitoring data to guide optimization.
