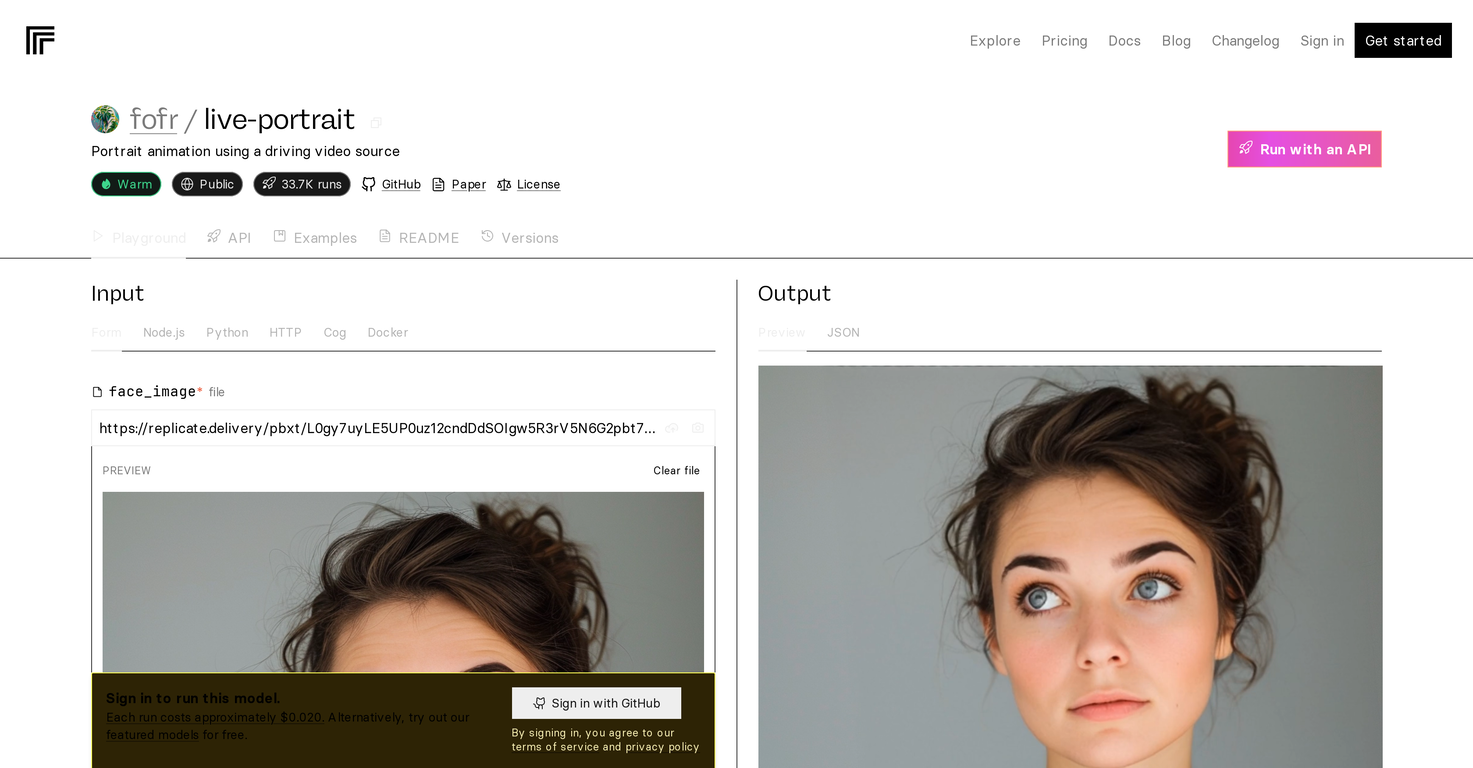

How does LivePortrait use a driving video source to animate portraits?

LivePortrait animates a still portrait by transferring the motion and facial expressions from a driving video onto it. The specific methodology isn't detailed here, but this generally involves feature extraction, mapping, and synthesis.

Is it possible to run the LivePortrait model on my local system?

Yes. LivePortrait is open source, so you can run it on your local system. The Docker option provided with the model can help with this.

How do input variations affect the prediction times of LivePortrait?

Prediction times for LivePortrait vary with the inputs. Specific details are not mentioned, but factors such as image complexity or resolution could affect them.

Why is the usage of LivePortrait in commercial applications restricted?

Commercial use of LivePortrait is restricted because the model uses InsightFace buffalo_l models, which have their own usage limitations.

Under what license are the LivePortrait code and weights released?

The LivePortrait code and weights are released under the MIT license.

What is the ComfyUI custom node and how does LivePortrait utilize it?

The ComfyUI custom node, created by Kijai, is a component that the LivePortrait model uses. Precise details of how the model uses this node are not mentioned.

Who converted the safetensor weights the model uses and why?

Kijai converted the safetensor weights that the model uses. The specific reasons for the conversion are not indicated.

What is the role of the safetensor weights in LivePortrait's performance?

The exact role of the safetensor weights in LivePortrait's performance isn't expressly stated. Weights hold a model's trained parameters, though, so they are most likely integral to the model's operation and its ability to generate animated portraits.

What kind of support is provided to help users understand how to use the LivePortrait model?

Considerable support is provided to help users understand how to use the LivePortrait model, in the form of documentation and available examples.

What are some popular applications of portrait animation with LivePortrait?

While a variety of applications for LivePortrait are possible, the key examples mentioned are digital art creation and virtual avatar design.

What are the requirements to run the fofr/live-portrait model with an API on Replicate?

The requirements to run the LivePortrait model with an API on Replicate are not specified. However, because the model is open source and has Docker integration, it may be deployed on various systems as long as they meet basic hardware and software requirements.

What is the cost of running the LivePortrait model on Replicate?

Running the LivePortrait model on Replicate costs approximately $0.020 per run, or about 50 runs per $1. The actual cost may vary depending on your inputs.
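To make the arithmetic concrete, here is a minimal sketch that turns the approximate per-run figure above into a budget estimate. The function names are illustrative, and the rate is only the approximate value quoted here; real costs may differ with your inputs.

```python
from decimal import Decimal

# Approximate per-run cost quoted above; actual cost varies with inputs.
COST_PER_RUN_USD = Decimal("0.020")


def estimate_cost(runs: int) -> Decimal:
    """Estimated total cost in USD for a given number of runs."""
    return COST_PER_RUN_USD * runs


def runs_for_budget(budget_usd: str) -> int:
    """Whole number of runs that fit within a budget given in USD."""
    return int(Decimal(budget_usd) // COST_PER_RUN_USD)


if __name__ == "__main__":
    print(runs_for_budget("1"))   # runs that fit in a $1 budget
    print(estimate_cost(120))     # estimated cost of 120 runs
```

Decimal is used instead of float so that small currency amounts divide without rounding surprises.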

Can I use LivePortrait for avatar animation?

Yes. Avatar animation is one of LivePortrait's applications: it brings static portrait images to life based on a driving video source.

Is this AI model related to any academic or scientific work?

LivePortrait is indeed related to academic work, being based on a paper titled 'Efficient Portrait Animation with Stitching and Retargeting Control'.

How can Docker be used to run this AI model on a personal computer?

The specifics of using Docker to run the LivePortrait model on a personal computer are unclear. However, because the model is open source and runs with Docker, it should be possible to run it locally this way, as long as the local system satisfies the necessary requirements.
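As a rough illustration only, the sketch below builds the kind of HTTP request a locally running model container might accept. It assumes the container exposes a Cog-style `POST /predictions` endpoint on port 5000, as Replicate-packaged images commonly do. That assumption is not confirmed for this model, and the input names `face_image` and `driving_video` are hypothetical placeholders; check the model's own documentation for the real ones. The request is constructed but not sent.

```python
import json
import urllib.request


def build_prediction_request(
    face_image_url: str,
    driving_video_url: str,
    host: str = "http://localhost:5000",  # assumed local container address
) -> urllib.request.Request:
    """Build (but do not send) a JSON POST request for a local prediction."""
    # Hypothetical input names; the real model may use different keys.
    payload = {
        "input": {
            "face_image": face_image_url,
            "driving_video": driving_video_url,
        }
    }
    return urllib.request.Request(
        url=f"{host}/predictions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


if __name__ == "__main__":
    req = build_prediction_request(
        "https://example.com/portrait.jpg",
        "https://example.com/driving.mp4",
    )
    print(req.get_method(), req.full_url)
```

With a container actually running, passing the request to `urllib.request.urlopen` would submit it; that step is left out because the endpoint and input schema are assumptions.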

What restrictions are there on the commercial use of this AI model?

Commercial use of LivePortrait is restricted because it relies on InsightFace buffalo_l models. The complete terms are not given here, but such restrictions generally follow the licensing terms of those specific models.
