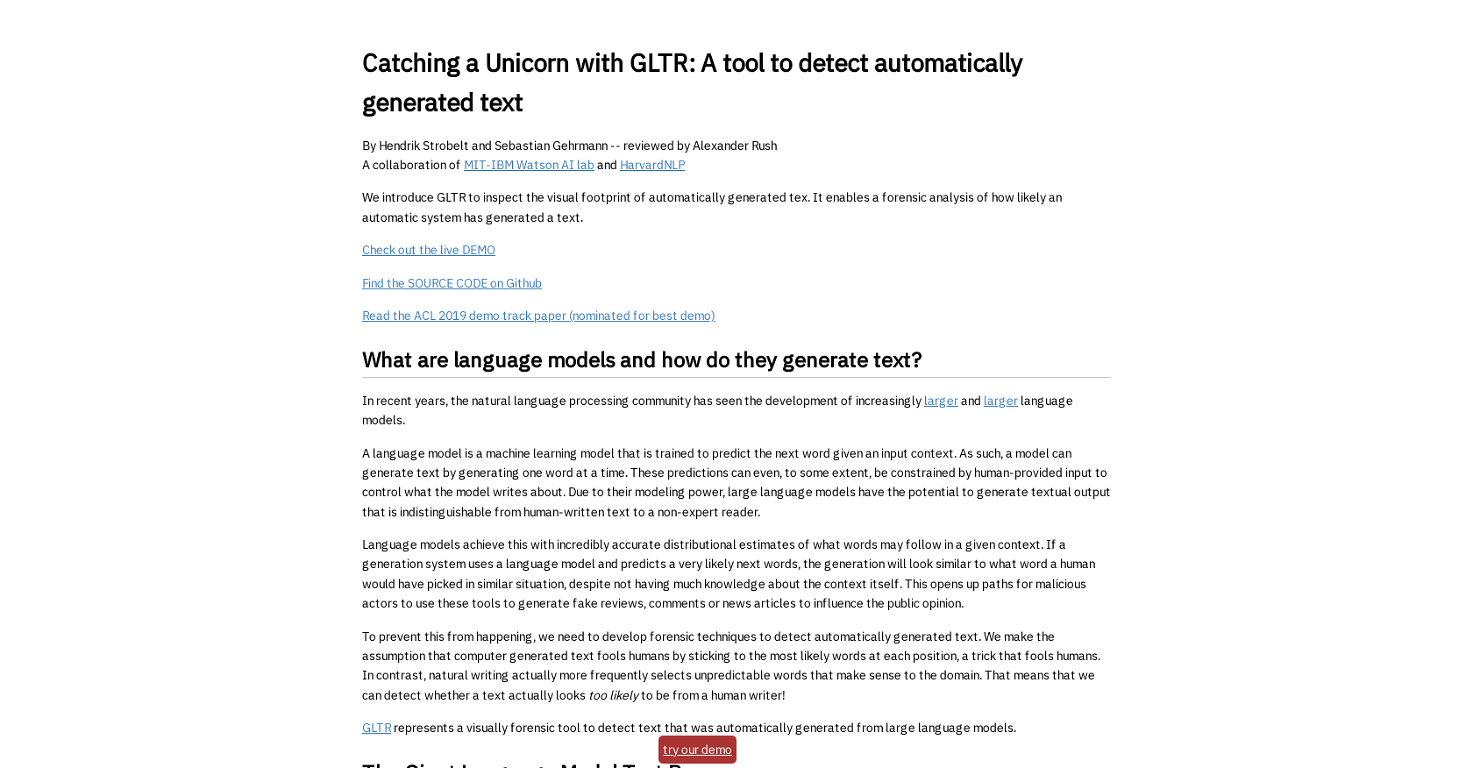

What exactly is GLTR?

GLTR is a tool developed by the MIT-IBM Watson AI lab and HarvardNLP. Its primary function is to detect automatically generated text via forensic analysis. It does this by estimating how likely it is that a language model, such as OpenAI's GPT-2 117M, generated the text.

How does GLTR detect artificially generated text?

GLTR detects artificially generated text by analyzing how likely a language model would be to generate each individual word in the text. For every position, the tool ranks the candidate words by how likely the model is to produce them, then highlights the word that actually appears in a color that reflects its rank.
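The ranking step can be illustrated with a minimal sketch. It does not call a real language model: `toy_probs` is a hypothetical, hand-written next-word distribution, and `token_rank` is an illustrative helper name, not part of GLTR's code.

```python
def token_rank(next_token_probs, actual_token):
    """Return the 1-based rank of actual_token among the predicted words.

    next_token_probs maps each candidate word to the probability the
    (hypothetical) model assigns to it at this position.
    """
    # Sort candidates from most likely to least likely.
    ordered = sorted(next_token_probs, key=next_token_probs.get, reverse=True)
    return ordered.index(actual_token) + 1


# Invented distribution for the context "The cat sat on the"
toy_probs = {"mat": 0.40, "floor": 0.25, "sofa": 0.20, "roof": 0.10, "moon": 0.05}

rank_mat = token_rank(toy_probs, "mat")    # the model's first choice
rank_moon = token_rank(toy_probs, "moon")  # the model's last choice
print(rank_mat, rank_moon)
```

A low rank means the word is one the model itself would have picked readily; a high rank means the word surprised the model.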

What is the significance of the color-coded words in GLTR's analysis?

GLTR uses a color-coded system to indicate the likelihood of a word being generated by a machine. The most likely words are highlighted in green, followed by yellow, then red for less likely words. Any words that were not likely to be generated by an AI model are marked in purple.
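The color scheme amounts to bucketing each word by its rank. The sketch below assumes cut-offs of top 10, top 100, and top 1,000, which are taken here to be GLTR's defaults; the text above does not state them, so treat the numbers and the function name `color_for_rank` as assumptions.

```python
def color_for_rank(rank, thresholds=(10, 100, 1000)):
    """Map a 1-based rank to a highlight color.

    thresholds holds the assumed upper bounds for green, yellow, and red;
    anything ranked below the last bound is purple.
    """
    green_max, yellow_max, red_max = thresholds
    if rank <= green_max:
        return "green"
    if rank <= yellow_max:
        return "yellow"
    if rank <= red_max:
        return "red"
    return "purple"


for example_rank in (1, 42, 730, 5000):
    print(example_rank, color_for_rank(example_rank))
```

Keeping the cut-offs in a parameter makes it easy to experiment with a stricter or looser scheme.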

What are the three histograms provided by GLTR and what do they represent?

GLTR provides three histograms that aggregate information across an entire piece of text. The first shows how many words fall into each color-coded category. The second illustrates the ratio between the probability of the top predicted word and the probability of the word that actually follows. The third shows the distribution of the entropies of the model's predictions.
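The quantities behind the three histograms can be sketched on toy data. Everything here is illustrative: the distributions in `toy_positions` are invented, the helper names are hypothetical, the ratio is computed as the probability of the actual word divided by the probability of the top prediction (one reading of the description above), and the cut-offs are shrunk to (1, 2, 3) only because the toy vocabulary has three words.

```python
import math
from collections import Counter


def entropy(probs):
    """Shannon entropy (in nats) of a probability distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def bucket(rank, thresholds):
    """Map a 1-based rank to a color name using three cut-offs."""
    for name, limit in zip(("green", "yellow", "red"), thresholds):
        if rank <= limit:
            return name
    return "purple"


def summarize(positions, thresholds=(10, 100, 1000)):
    """Collect the raw values that the three histograms would be drawn from.

    positions is a list of (distribution, actual_word) pairs, one per word.
    """
    color_counts = Counter()
    ratios = []
    entropies = []
    for dist, actual in positions:
        ordered = sorted(dist, key=dist.get, reverse=True)
        rank = ordered.index(actual) + 1
        color_counts[bucket(rank, thresholds)] += 1
        # Probability of the actual word relative to the top prediction.
        ratios.append(dist[actual] / dist[ordered[0]])
        # Uncertainty of the model at this position.
        entropies.append(entropy(dist.values()))
    return {"color_counts": color_counts, "ratios": ratios, "entropies": entropies}


# Invented per-position distributions paired with the word that actually occurs.
toy_positions = [
    ({"the": 0.5, "a": 0.3, "cat": 0.2}, "the"),
    ({"cat": 0.6, "dog": 0.3, "the": 0.1}, "dog"),
    ({"sat": 0.7, "ran": 0.2, "hid": 0.1}, "hid"),
]

summary = summarize(toy_positions, thresholds=(1, 2, 3))
print(summary["color_counts"])
print(summary["ratios"])
print(summary["entropies"])
```

Binning each of the three lists would give the corresponding histogram; the binning itself is left out to keep the sketch short.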

What are some practical uses of GLTR?

GLTR has several practical applications, including detecting fake reviews, comments, and news articles that were generated by large language models. It can help flag machine-generated text that a reader would otherwise find hard to tell apart from human writing, which supports the scrutiny of information spread via digital channels.

Is the source code for GLTR available to the public?

Yes, the source code for GLTR is publicly available. It can be accessed and downloaded via GitHub, allowing for its widespread use and facilitating further development by the tech community.

How can GLTR be used to detect fake reviews or comments?

GLTR can detect fake reviews or comments by analyzing the likelihood of individual words within the text being generated by a language model. If a large proportion of the words appear to be likely choices for an AI-generated text, the review or comment may be considered artificially generated or fake.
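The "large proportion of likely words" idea can be sketched as a simple fraction. This is an illustrative heuristic, not GLTR's own output: the rank lists are invented, `top_k_fraction` is a hypothetical helper, and no particular cut-off for calling a text fake is implied.

```python
def top_k_fraction(ranks, k=10):
    """Fraction of words whose rank under the model is within the top k."""
    if not ranks:
        raise ValueError("ranks must not be empty")
    return sum(1 for r in ranks if r <= k) / len(ranks)


# Invented per-word ranks for two short reviews.
machine_like_ranks = [1, 1, 2, 1, 3, 1, 5, 2, 1, 4]
human_like_ranks = [1, 15, 2, 340, 1, 7, 1200, 3, 56, 1]

machine_fraction = top_k_fraction(machine_like_ranks)
human_fraction = top_k_fraction(human_like_ranks)
print(machine_fraction, human_fraction)
```

A noticeably higher fraction suggests the text stays close to what the model would have written, which is a reason to look more closely rather than a verdict on its own.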

What is the GPT-2 117M language model that GLTR uses?

The GPT-2 117M language model, utilized by GLTR, is a machine learning model developed by OpenAI. The model is trained to predict the next word in a given input context, allowing it to generate text one word at a time. Its predictions can even be influenced to some degree by human-provided input.

Can GLTR be used to differentiate between human-written text and machine-generated text?

Yes, GLTR is designed specifically to differentiate between human-written text and machine-generated text. It does this by analyzing how likely an AI model would be to produce each word in a text, and it presents the result as a visual forensic analysis that highlights which words the model finds likely and which it finds unlikely.

How successful is GLTR at identifying computer-generated content?

According to the authors' reported results, GLTR's statistical methods can detect generation artifacts across multiple sampling schemes, and using the tool improved the human detection rate of fake text from 54% to 72% without any prior training.

What kind of visual evidence does GLTR provide?

GLTR provides visual evidence of artificially generated text through color coding each word according to the likelihood of it being generated by a model, and generating three histograms that aggregate data across the entire text. This visual footprint serves as an accessible representation of the likelihood that a text was artificially generated.

Can the average user access a live demo of GLTR?

Yes, the average user can access a live demo of GLTR. The demo is available on the project's website and gives users firsthand experience of the tool's capabilities.

How is GLTR used in the field of natural language processing?

GLTR serves as a vital tool in the field of natural language processing, offering capabilities to detect automatically generated text. As models develop the potential to generate text indistinguishable from human-written content, GLTR provides an effective counter-measure by highlighting likely AI-generated phrases in the text.

How can researchers use GLTR and where can they learn more?

Researchers can employ GLTR to detect artificial text within their study materials. They can learn more about the detection tool's methodology and features through the ACL 2019 demo track paper, which was nominated for best demo and provides an in-depth look into the workings of GLTR.

What does it mean when GLTR highlights a word in purple?

When GLTR highlights a word in purple, it indicates that the word is unlikely to have been produced by an AI model. Purple words are essentially outliers, not fitting into the usual pattern predicted by the model.

Can GLTR be used to analyze news articles for authenticity?

Yes, GLTR can be used to analyze news articles for authenticity. By showing which words a language model would have been likely or unlikely to generate, it offers a means to examine an article and potentially detect auto-generated text.

Is there a paper or research study available that delves into the creation and use of GLTR?

Yes, there is a paper titled 'GLTR: Statistical Detection and Visualization of Generated Text'. It is a research study that delves into the creation and use of GLTR, and it was presented at the 57th Annual Meeting of the Association for Computational Linguistics: System Demonstrations.

What does it mean if most of the words in a text analyzed by GLTR are highlighted in green or yellow?

If most of the words in a text analyzed by GLTR are highlighted in green or yellow, it is a strong indicator that the text was generated by an AI. These colors represent words that an AI model is likely to produce in a given context.

How does GLTR determine whether a word is likely to have been produced by a computer?

GLTR determines whether a word is likely to have been produced by a computer by analyzing the likelihood of a language model such as GPT-2 117M predicting that word in the given context. This likelihood forms the basis of a ranking system that colors the words according to their predicted chances of being AI-generated.

Can GLTR be used to detect all forms of artificially generated text or does it specialize in detecting specific types?

GLTR can be used to detect a wide range of artificially generated text. While it uses the output of the GPT-2 117M model from OpenAI for its analysis, its statistical methods are capable of detecting generation artifacts across different types of language models, making it a flexible tool for detecting a wide array of AI-generated content.
