What is the main purpose of GPT-PROMPT-ENGINEER?

The main purpose of GPT-PROMPT-ENGINEER is to automate and enhance the process of prompt engineering. By generating, testing, and ranking numerous prompts, it helps users find the most effective prompt for a specific task. Users supply a task description and some test cases, and GPT-PROMPT-ENGINEER does the heavy lifting.

What is prompt engineering?

Prompt engineering is the process of carefully crafting inputs (prompts) so that a language model generates the desired outputs. It can seem like an art because of the experimentation required to discover which prompts work best for a given purpose.

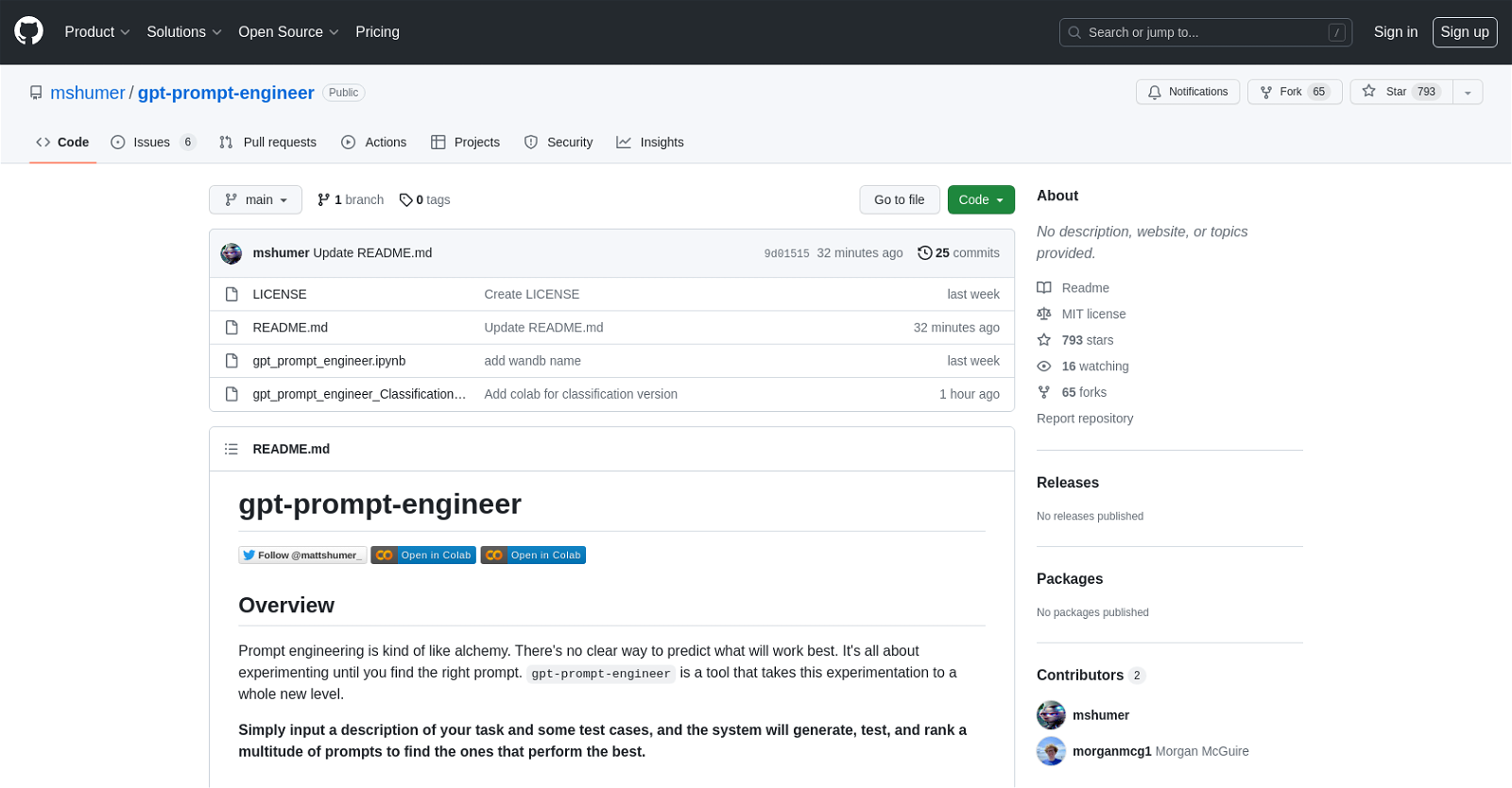

How does GPT-PROMPT-ENGINEER use GitHub?

GPT-PROMPT-ENGINEER uses GitHub for version control and collaboration. The project is hosted publicly on GitHub, where it has 793 stars and 65 forks, a sign of community recognition and of its potential for collaboration. From GitHub, users can clone or download the repository and read the README.md file for details. Recent updates suggest that the project is actively maintained.

Why does GPT-PROMPT-ENGINEER use an ELO rating system?

The ELO rating system is integrated into GPT-PROMPT-ENGINEER to solve the problem of ranking the performance of diverse prompts. Each prompt starts with an ELO rating, and as the prompts compete against each other, their ratings change depending on how they perform. This gives users a clear indicator of each prompt's effectiveness.
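To illustrate how a rating changes after one comparison, here is a minimal sketch of the standard Elo update. It is not taken from the GPT-PROMPT-ENGINEER source: the starting rating of 1200, the K-factor of 32, and the function names `expected_score` and `update_elo` are assumptions chosen for illustration.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A beats B under the standard Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update_elo(rating_a: float, rating_b: float, score_a: float, k: float = 32.0):
    """Return the updated (rating_a, rating_b) after one comparison.

    score_a is 1.0 if A won, 0.0 if A lost, and 0.5 for a draw.
    The K-factor of 32 is an assumed value, not one stated in the source.
    """
    expected_a = expected_score(rating_a, rating_b)
    expected_b = 1.0 - expected_a
    new_a = rating_a + k * (score_a - expected_a)
    new_b = rating_b + k * ((1.0 - score_a) - expected_b)
    return new_a, new_b


# Two prompts start level (assumed starting rating: 1200); prompt A wins once.
a, b = update_elo(1200.0, 1200.0, score_a=1.0)
print(a, b)  # 1216.0 1184.0
```

Because the winner gains exactly what the loser gives up, the total rating in the pool stays constant, and a win against a higher-rated prompt moves the ratings more than a win against a lower-rated one.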

What is the maximum number of prompts that I can generate with GPT-PROMPT-ENGINEER?

The available information says that you can choose how many prompts to generate, but it does not specify a maximum. Note that generating many prompts can be expensive; 10 is suggested as a good starting point.
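To see why cost grows quickly with the number of prompts, the sketch below counts comparisons under an assumed scheme in which every pair of prompts is compared once on every test case. The pairing scheme and the function name `estimate_comparisons` are assumptions; the tool's actual number of API calls may differ.

```python
from math import comb


def estimate_comparisons(num_prompts: int, num_test_cases: int) -> int:
    """Count head-to-head comparisons, assuming every pair of prompts
    is compared once on every test case (an assumed scheme)."""
    return comb(num_prompts, 2) * num_test_cases


for n in (5, 10, 20):
    print(n, estimate_comparisons(n, num_test_cases=5))
# 5 50
# 10 225
# 20 950
```

Under this assumption, doubling the number of prompts roughly quadruples the number of comparisons.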

How does GPT-PROMPT-ENGINEER test the generated prompts?

GPT-PROMPT-ENGINEER tests the generated prompts by comparing their performance against the test cases. Each prompt is used to generate responses to the different test cases, and their effectiveness is rated using the ELO rating system.
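The testing loop described above can be sketched as a small round-robin tournament. This is an illustrative sketch, not the project's code: `run_tournament`, `toy_generate`, and `toy_judge` are hypothetical names, the stubs stand in for real model calls, and the starting rating (1200) and K-factor (32) are assumed values.

```python
from itertools import combinations


def run_tournament(prompts, test_cases, generate, judge, k=32.0, start=1200.0):
    """Compare every pair of prompts on every test case and return
    (prompt, rating) pairs sorted from best to worst."""
    ratings = {prompt: start for prompt in prompts}
    for prompt_a, prompt_b in combinations(prompts, 2):
        for case in test_cases:
            out_a = generate(prompt_a, case)
            out_b = generate(prompt_b, case)
            # judge returns 1.0 if A's output is better, 0.0 if B's is, 0.5 for a draw
            score_a = judge(case, out_a, out_b)
            expected_a = 1.0 / (1.0 + 10 ** ((ratings[prompt_b] - ratings[prompt_a]) / 400.0))
            ratings[prompt_a] += k * (score_a - expected_a)
            ratings[prompt_b] += k * ((1.0 - score_a) - (1.0 - expected_a))
    return sorted(ratings.items(), key=lambda item: item[1], reverse=True)


def toy_generate(prompt, case):
    """Stub standing in for a model call: echoes the prompt and the test case."""
    return f"{prompt} {case}"


def toy_judge(case, out_a, out_b):
    """Stub judge that simply prefers the shorter output."""
    if len(out_a) == len(out_b):
        return 0.5
    return 1.0 if len(out_a) < len(out_b) else 0.0


prompts = [
    "Be brief.",
    "Answer in a full paragraph.",
    "Answer with exhaustive detail and many examples.",
]
test_cases = ["Describe a fitness app", "Describe a note-taking tool"]

ranking = run_tournament(prompts, test_cases, toy_generate, toy_judge)
for prompt, rating in ranking:
    print(f"{rating:7.1f}  {prompt}")
```

In a real run, the generate and judge steps would each be model calls, which is where the cost of testing many prompts comes from.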

Does GPT-PROMPT-ENGINEER come with a version for classification tasks?

Yes, GPT-PROMPT-ENGINEER includes a version designed specifically for classification tasks. In this variation, it checks whether the output for each test case matches the expected output and provides a table with the scores for each prompt.

What are the main features of GPT-PROMPT-ENGINEER?

The main features of GPT-PROMPT-ENGINEER are prompt generation using GPT models; testing of each prompt against all the test cases; ranking of the prompts using an ELO rating system; a classification version; and optional logging to Weights & Biases of the configs, the system and user prompts for each part, the test cases used, and the final ranked ELO rating for each candidate prompt.

How can I get started with GPT-PROMPT-ENGINEER?

To get started with GPT-PROMPT-ENGINEER, you need to open the project in Google Colab or a local Jupyter notebook and add your OpenAI API key. You can then define your use-case and create relevant test cases. Finally, you call a function to generate, test, and rank a set of potential prompts.

Can GPT-PROMPT-ENGINEER log my configurations to Weights & Biases?

Yes, GPT-PROMPT-ENGINEER features optional logging to the Weights & Biases platform. However, this feature is currently available only in the main GPT-PROMPT-ENGINEER notebook.

How is the score calculated in the Classification Version of GPT-PROMPT-ENGINEER?

In the Classification Version of GPT-PROMPT-ENGINEER, the score is calculated by checking whether the output for each test case matches the expected output. The scores for each prompt are then presented in a table.
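As a rough illustration of match-based scoring, the sketch below scores each prompt by the fraction of test cases whose output matches the expected answer. The function names, the `prompt` and `answer` keys, and the keyword-based stub classifier are all hypothetical; the real tool calls a language model and may normalize or score outputs differently.

```python
def score_prompts(prompts, test_cases, classify):
    """Return {prompt: fraction of test cases answered correctly}."""
    scores = {}
    for prompt in prompts:
        correct = 0
        for case in test_cases:
            output = classify(prompt, case["prompt"])
            # Compare case-insensitively and ignore surrounding whitespace
            if output.strip().lower() == case["answer"].strip().lower():
                correct += 1
        scores[prompt] = correct / len(test_cases)
    return scores


def toy_classify(prompt, text):
    """Stub standing in for a model call; behaviour depends on the prompt."""
    if prompt.startswith("Always"):
        return "true"
    lowered = text.lower()
    return "true" if "love" in lowered or "great" in lowered else "false"


prompts = [
    "Answer true if the review is positive.",
    "Always answer true.",
]
test_cases = [
    {"prompt": "I love this app", "answer": "true"},
    {"prompt": "Terrible battery life", "answer": "false"},
    {"prompt": "Great value", "answer": "true"},
    {"prompt": "Would not buy again", "answer": "false"},
]

scores = score_prompts(prompts, test_cases, toy_classify)
for prompt, score in scores.items():
    print(f"{score:.2f}  {prompt}")
# 1.00  Answer true if the review is positive.
# 0.50  Always answer true.
```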

How can I contribute to the GPT-PROMPT-ENGINEER project?

Contributions to the GPT-PROMPT-ENGINEER project are welcome. Ideas for enhancements include different system prompt generators that create various styles of prompts, automatic generation of test cases, and extending the classification version to support more than two classes using tiktoken.

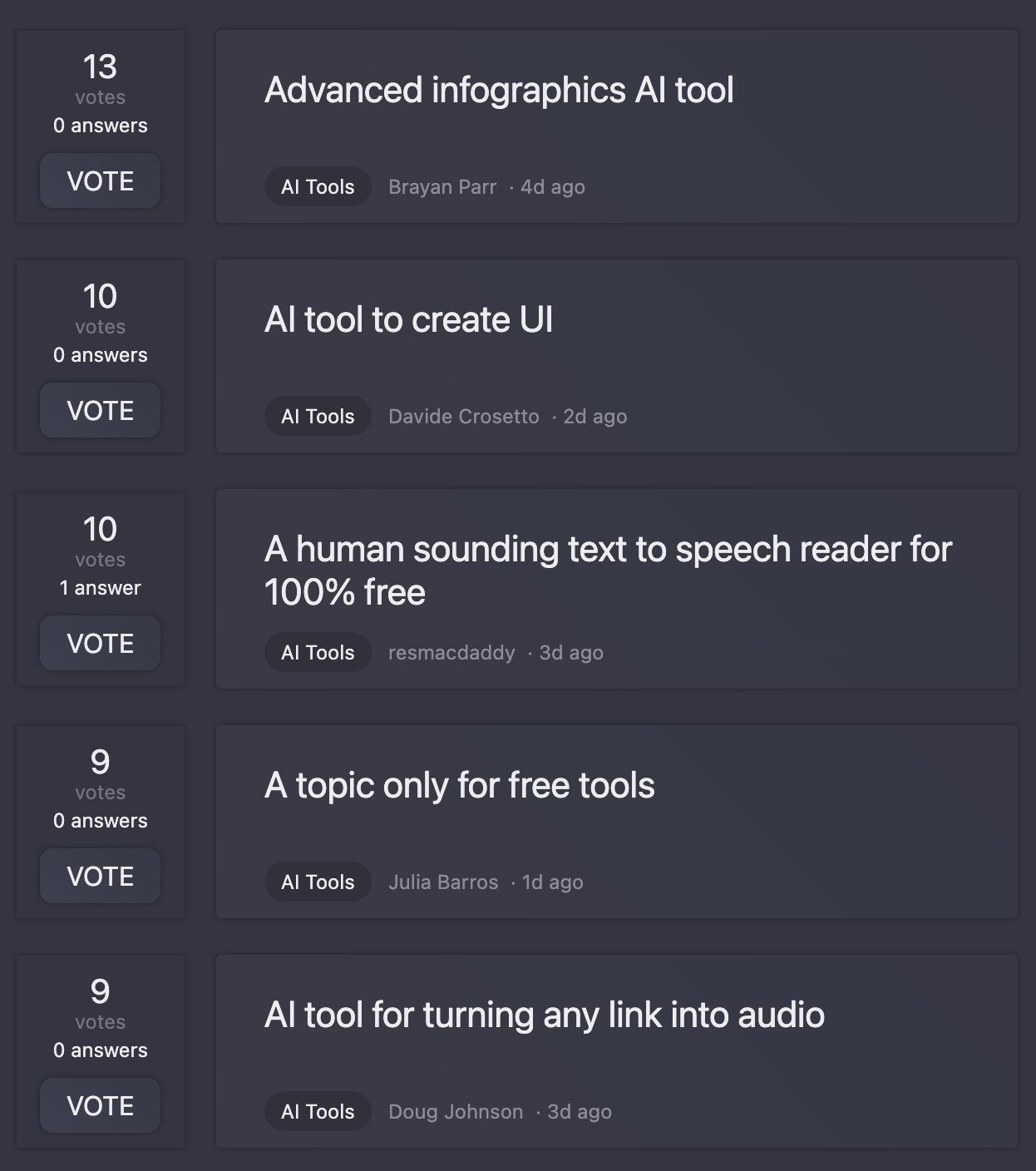

What similar tools to GPT-PROMPT-ENGINEER are there?

The available information does not mention any similar tools.

Is GPT-PROMPT-ENGINEER compatible with GPT-3, 3.5-turbo and 4?

GPT-PROMPT-ENGINEER is compatible with GPT-3, GPT-3.5-turbo, and GPT-4. Users without GPT-4 access can switch to the GPT-3.5-turbo model.

Do I need to write a test case for each prompt in GPT-PROMPT-ENGINEER?

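You need test cases, but not a separate one for each prompt. For GPT-PROMPT-ENGINEER to generate, test, and rate prompts, you define a set of test cases for each task or use case, and every generated prompt is tested against that same set.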

Are there any resources or documentation available for GPT-PROMPT-ENGINEER?

The GPT-PROMPT-ENGINEER GitHub repository contains a comprehensive README.md file that covers the tool's features, usage instructions, setup process, and more. There is also a LICENSE file that lays out the terms of use for the tool.

How can I clone or download the GPT-PROMPT-ENGINEER repository?

To clone or download the GPT-PROMPT-ENGINEER repository, navigate to the repository's main page on GitHub. You can then clone the repository using HTTPS or GitHub CLI, or download it as a ZIP file. Instructions for each method are provided on the page.

What is the license for GPT-PROMPT-ENGINEER?

GPT-PROMPT-ENGINEER is licensed under the MIT license. The full license terms can be found in the LICENSE file in the repository.

What are the system requirements for running GPT-PROMPT-ENGINEER?

No specific system requirements are listed for GPT-PROMPT-ENGINEER. However, because it is a Jupyter notebook, it can be run in any environment that supports Jupyter notebooks, such as Google Colab or a local Jupyter installation.

Are there any future updates or improvements planned for GPT-PROMPT-ENGINEER?

The available information does not mention any planned updates or improvements.