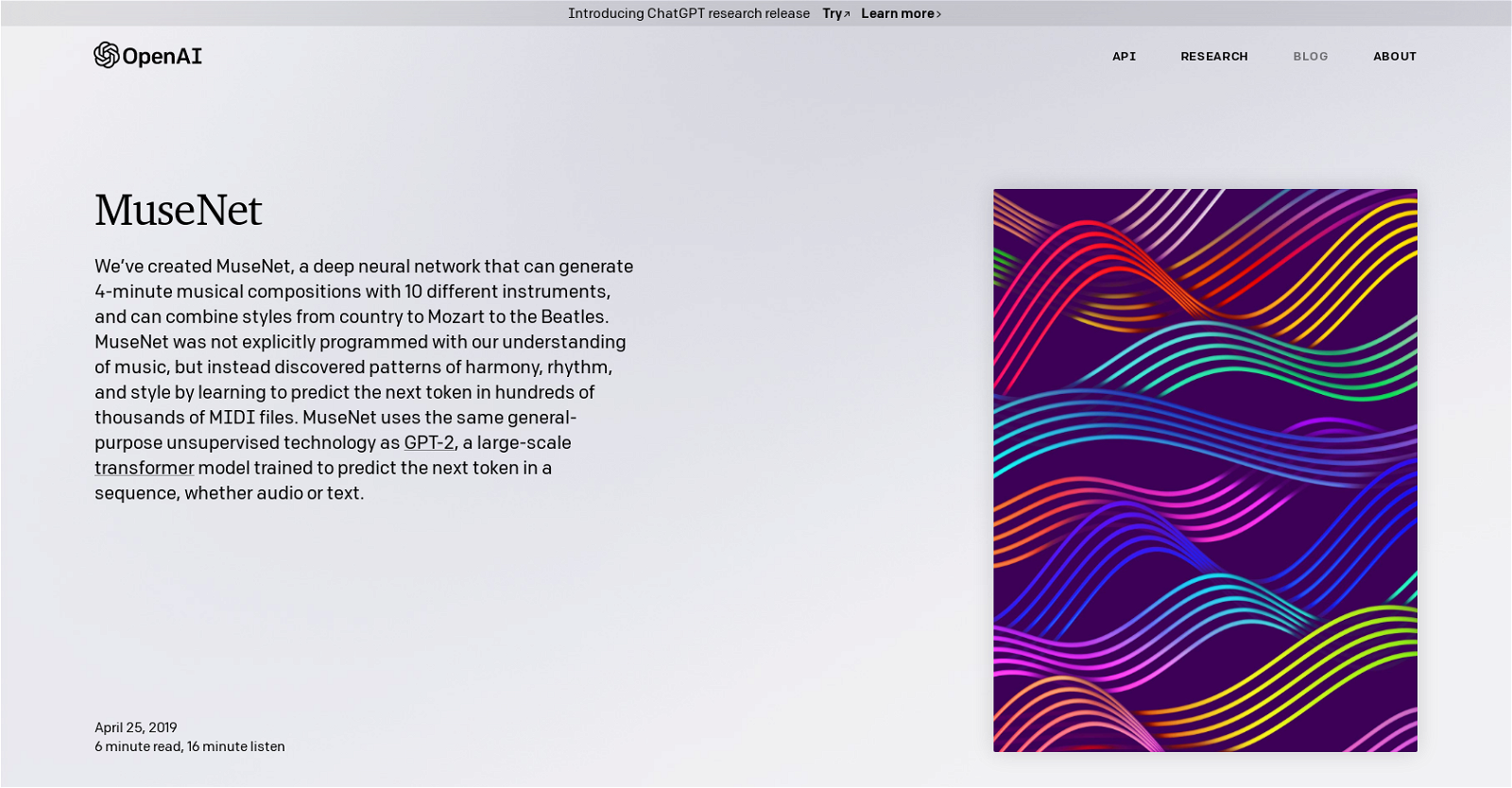

What is MuseNet?

MuseNet is a deep neural network developed by OpenAI that can generate four-minute musical compositions with up to ten different instruments. It can combine styles ranging from country to Mozart to the Beatles. It was not explicitly programmed with an understanding of music. Instead, it discovered patterns of harmony, rhythm, and style by learning to predict the next token in hundreds of thousands of MIDI files.

How does MuseNet compose music?

MuseNet generates music with a transformer model that predicts the next token in a sequence. During training, it was given a set of notes and asked to predict the note that follows. At generation time, the same prediction is applied one token at a time to extend a prompt into a new composition. Composer and instrumentation tokens included during training let users steer the type of music being generated. One encoding OpenAI describes is chordwise: every combination of notes sounding at once is treated as an individual 'chord', and a token is assigned to each chord.
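The predict-then-append loop described above can be illustrated with a toy model. This is only a sketch: a bigram frequency table stands in for the transformer, note names stand in for MIDI-derived tokens, and the names `train_bigram`, `generate`, and `pieces` are hypothetical rather than part of MuseNet.

```python
import random
from collections import Counter, defaultdict

def train_bigram(sequences):
    """Count how often each token follows each other token."""
    counts = defaultdict(Counter)
    for seq in sequences:
        for prev, nxt in zip(seq, seq[1:]):
            counts[prev][nxt] += 1
    return counts

def generate(counts, prompt, length, rng):
    """Extend the prompt one token at a time by sampling a follower."""
    out = list(prompt)
    for _ in range(length):
        followers = counts.get(out[-1])
        if not followers:  # no known continuation: stop early
            break
        tokens = list(followers)
        weights = [followers[t] for t in tokens]
        out.append(rng.choices(tokens, weights=weights, k=1)[0])
    return out

# Hypothetical toy "pieces"; a real model would train on tokenized MIDI.
pieces = [
    ["C4", "E4", "G4", "C5", "G4", "E4", "C4"],
    ["C4", "D4", "E4", "F4", "G4", "F4", "E4"],
]
model = train_bigram(pieces)
melody = generate(model, ["C4"], 8, random.Random(0))
print(melody)
```

A real transformer conditions on the whole preceding context rather than only the last token, but the generation loop has the same shape: predict a distribution, sample a token, append it, repeat.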

What genres can MuseNet create music in?

MuseNet can create music in a broad range of genres, from classical (for example, Mozart) and country to pop (for example, the Beatles), and it can blend these styles in novel ways. This range is a consequence of its training on MIDI files that represent many different musical genres.

In what formats does MuseNet generate the music?

IDK

How many different instruments can MuseNet utilize?

MuseNet can utilize up to 10 different instruments in a single composition. The specific instruments used can vary, influenced by the tokens provided during the generation phase.

What influences the style of music generated by MuseNet?

The style of music generated by MuseNet is influenced mainly by the composer and instrumentation tokens supplied at generation time. It is also shaped by the training data, which was drawn from sources such as Classical Archives and BitMidi and covers a variety of genres, styles, and instruments.

What is the technology behind MuseNet?

MuseNet uses the same general-purpose unsupervised technology as GPT-2, a large-scale transformer model trained to predict the next token in a sequence, whether audio or text. Both models share this training objective, but MuseNet adds music-specific elements such as composer and instrumentation tokens.

What does it mean that MuseNet uses a 'chordwise encoding'?

In a chordwise encoding, every combination of notes sounding at once is treated as an individual 'chord', and each distinct chord is assigned its own token. The model then predicts the next chord token from the chords that came before it. OpenAI's write-up presents this as one of several encodings it tried. The final encoding it describes combines pitch, volume, and instrument information into a single token.
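A minimal sketch of the chordwise idea, under simplifying assumptions: notes are grouped by onset time only (durations and volume are ignored), and token ids are assigned in order of first appearance. The function name `chordwise_encode` and the input layout are hypothetical, not taken from MuseNet.

```python
from collections import defaultdict

def chordwise_encode(notes, vocab=None):
    """Map each set of simultaneously starting pitches to one token id.

    notes: iterable of (onset_time, midi_pitch) pairs.
    Returns (token_ids, vocab), where vocab maps chord -> token id.
    """
    vocab = {} if vocab is None else vocab
    by_onset = defaultdict(set)
    for onset, pitch in notes:
        by_onset[onset].add(pitch)

    token_ids = []
    for onset in sorted(by_onset):
        chord = frozenset(by_onset[onset])
        if chord not in vocab:  # first time this chord is seen
            vocab[chord] = len(vocab)
        token_ids.append(vocab[chord])
    return token_ids, vocab

# C major triad, a single D, then the same triad again.
notes = [(0, 60), (0, 64), (0, 67), (1, 62), (2, 60), (2, 64), (2, 67)]
ids, vocab = chordwise_encode(notes)
print(ids)  # [0, 1, 0]
```

The repeated triad maps to the same token, which is the point of the scheme. The cost is that the vocabulary grows with every distinct note combination in the data.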

How does MuseNet blend different musical styles?

MuseNet blends musical styles by generating notes sequentially from a mixed selection of style-specific tokens. For example, the first few notes could be in the style of Mozart and the notes that follow could be in the style of the Beatles. Because each new note is predicted from everything that came before it, MuseNet can transition smoothly between these styles and create a unique blend.

What datasets were used to train MuseNet?

MuseNet was trained using a dataset collected from various sources such as Classical Archives and BitMidi. It also made use of the MAESTRO dataset. These datasets included MIDI files of many different music genres, providing a strong foundation for MuseNet's diverse generative capabilities.

How are the composer and instrumentation tokens used in MuseNet?

Composer and instrumentation tokens in MuseNet serve to give more control over the types of samples it generates. During training, these tokens are prepended to each sample so the model learns to use this information when predicting notes. At generation time, specifying these tokens helps to condition the model to create samples in a chosen style or with certain instruments.
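As a rough illustration of prepending conditioning tokens, the sketch below builds one training sequence and one generation prompt. The token spellings (`<composer:...>`, `<instrument:...>`, `<start>`) and the helper `build_sequence` are assumptions made for this example. The source does not specify MuseNet's actual token format.

```python
def build_sequence(composer, instruments, note_tokens):
    """Prepend conditioning tokens to a list of note tokens."""
    header = [f"<composer:{composer}>"]
    header += [f"<instrument:{name}>" for name in instruments]
    return header + ["<start>"] + list(note_tokens)

# Training: the header travels with the notes, so the model can learn
# to associate it with the music that follows.
training_example = build_sequence("chopin", ["piano"], ["C4", "E4", "G4"])

# Generation: supply only the header and let the model continue from it.
prompt = build_sequence("beatles", ["piano", "drums", "bass", "guitar"], [])

print(training_example)
print(prompt)
```

Because the header is ordinary input to a next-token predictor, it biases the output rather than constraining it, which is consistent with the limitations described further down.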

What control do users have over the music generated by MuseNet?

Users have some control over the music MuseNet generates. They choose a composer or style, optionally pick the start of a famous piece as a prompt, and then start the generation. This makes it possible to explore the different musical styles MuseNet can create. In advanced mode, users can interact with the model directly to create entirely new pieces.

What are some limitations of MuseNet?

MuseNet has a few limitations, including unpredictability in instrument selection and difficulty in managing odd pairings of styles and instruments. Despite user input, it sometimes chooses unexpected instruments due to its inherent randomness and probabilistic nature. It also struggles with strange pairings, like generating a Chopin piece with bass and drums, as it's more inclined towards instruments typical to the specific genre or style.

How does MuseNet remember long-term structure in music?

MuseNet is equipped to remember long-term structure in music through the use of a Sparse Transformer model. This enables it to pay attention to a context of 4,096 tokens, allowing it to remember and replicate the long-term structures found within a musical piece.

What are the similarities and differences between MuseNet and GPT-2?

MuseNet and GPT-2 are both large-scale transformer models trained to predict the next token in a sequence. They differ in application: MuseNet was developed for music generation, whereas GPT-2 was designed for text generation. Because of this focus, MuseNet incorporates additional musical tokens and takes melodic structure into account.

What kind of musical structures can MuseNet create?

MuseNet can create a variety of melodic structures, as heard in the samples imitating Chopin and Mozart on OpenAI's website. The melodic structure it generates depends on the composer and style tokens provided at generation time.

Can MuseNet generate music in the style of specific composers or bands?

Yes, MuseNet can generate music in the style of specific composers or bands. This is enabled by the use of composer and instrumentation tokens during the generation phase. The tokens equip the model with the necessary information to generate music replicating the style of specific artists like Chopin, Journey or the Beatles.

How does MuseNet handle copyright?

OpenAI states that it does not own the music MuseNet produces and asks users not to charge for it. However, it makes no guarantee that the music is free from external copyright claims, so output that closely replicates copyrighted material could infringe existing copyrights.

Is it possible to interact and create music with MuseNet in real-time?

IDK

What is the purpose of structural embeddings in MuseNet?

Structural embeddings give the model more context. They help the model track the passage of time within a sample, which helps it synchronize notes that sound at the same time. They also tell the model where a given sample sits within the larger musical piece.
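To make the two kinds of context concrete, the sketch below computes, for each note, a timing index (shared by notes that start together) and a part index (which slice of the piece the note falls in). In a real model these integers would select learned embedding vectors. The function name, the tick-based input, and the default of 128 parts are assumptions made for illustration.

```python
def structural_indices(onsets, piece_length, num_parts=128):
    """Return (timing_index, part_index) lists, one entry per note.

    onsets: onset time of each note in ticks, in non-decreasing order.
    piece_length: total length of the piece in ticks.
    """
    timing = []
    step = -1
    previous = None
    for onset in onsets:
        if onset != previous:  # a new moment in time begins
            step += 1
            previous = onset
        timing.append(step)
    # Integer bucket of the piece that each onset falls into.
    parts = [min(num_parts - 1, onset * num_parts // piece_length)
             for onset in onsets]
    return timing, parts

onsets = [0, 0, 0, 480, 480, 960]
timing, parts = structural_indices(onsets, piece_length=1920, num_parts=4)
print(timing)  # [0, 0, 0, 1, 1, 2]
print(parts)   # [0, 0, 0, 1, 1, 2]
```

Notes that sound together receive the same timing index, so the model can treat them as simultaneous even though they occupy separate positions in the token sequence.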
