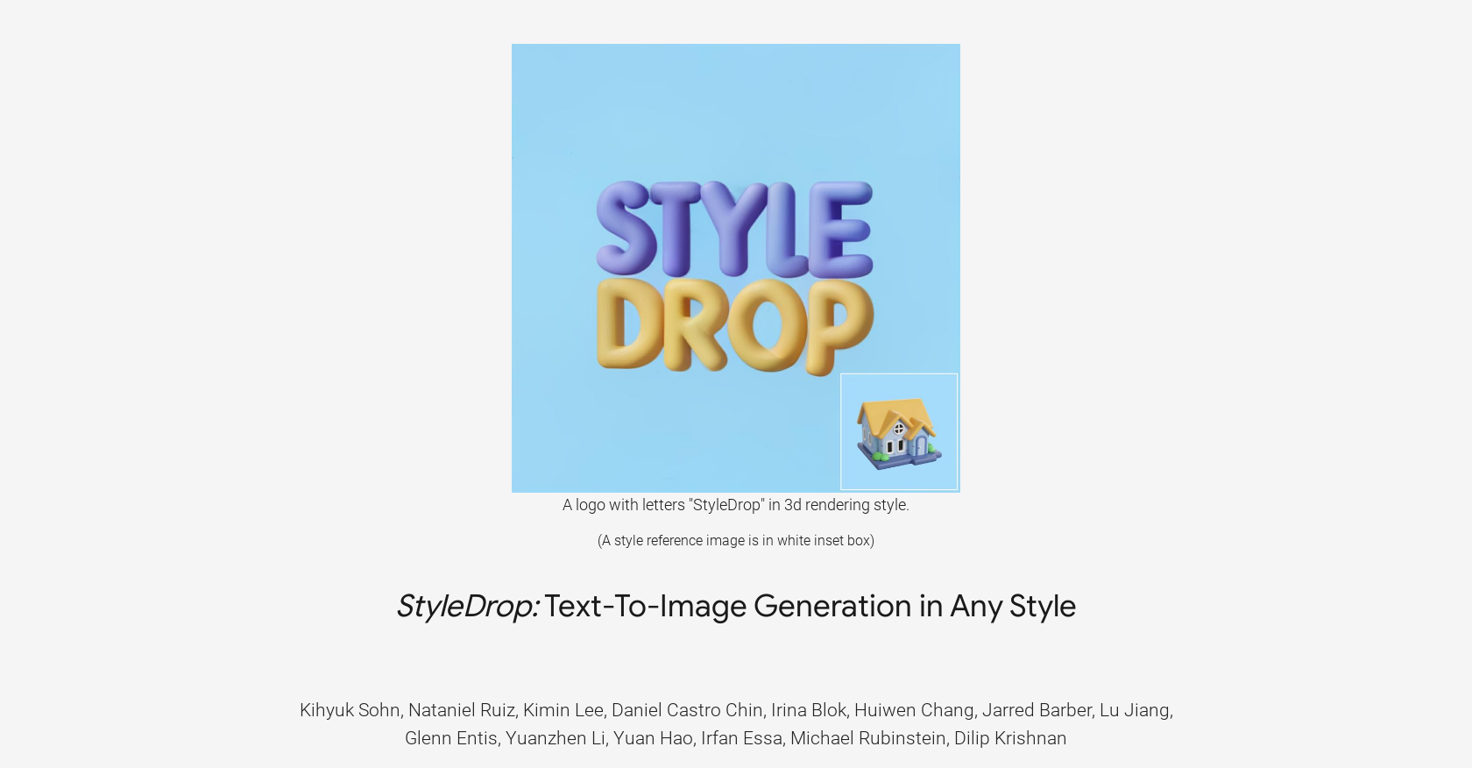

What is StyleDrop?

StyleDrop is an artificial intelligence tool that enables the generation of images in specific styles. It's powered by Muse, a text-to-image generative vision transformer, and is designed to capture nuances and details of a user-provided style, such as color schemes, shading, design patterns, and local and global effects. By fine-tuning a small number of trainable parameters, StyleDrop can improve image quality through iterative training, even when the user provides a single image as the style reference.

Who developed StyleDrop?

StyleDrop is developed by Google Research.

How does StyleDrop work?

StyleDrop works by fine-tuning a small number of trainable parameters - less than 1% of the total model parameters. This enables it to quickly learn and capture the nuances of a user-provided style, from color schemes to design patterns. It then further enhances the image quality through iterative training, a process which can generate impressive results even with a single image as the style reference.

What is the technology behind StyleDrop?

The technology behind StyleDrop is Muse, a text-to-image generative vision transformer. Muse is designed to generate high-quality images from text prompts, appending natural language style descriptors to content descriptors during both training and generation.

What are some key features of StyleDrop?

Some key features of StyleDrop include the ability to generate high-quality images from text prompts in any style described by a single reference image and the ability to train with user's own brand assets. StyleDrop also performs well in style-tuning text-to-image models, outperforming other methods like DreamBooth and Textual Inversion.

How does StyleDrop generate images in specific styles?

StyleDrop generates images in specific styles by using Muse, a text-to-image generative vision transformer. It fine-tunes less than 1% of the total model parameters, capturing the unique aspects of a provided style including colors, shading and patterns. By appending natural language style descriptors to content descriptors during both training and generation, the style can be translated effectively to the final image.

How does StyleDrop outperform other AI tools like DreamBooth and Textual Inversion?

StyleDrop outperforms AI tools like DreamBooth and Textual Inversion through its superior style-tuning performance. An extensive study demonstrated that for the task of style tuning text-to-image models, StyleDrop on Muse convincingly outperformed these other methods.

Can StyleDrop generate images from a single style reference?

Yes, StyleDrop can generate impressive results even when a user provides only a single image as the style reference. This single image is used as a style descriptor, which is appended to the content descriptors at both training and generation processes.

What is Muse and how does it enhance StyleDrop's capabilities?

Muse is a text-to-image generative vision transformer, which powers StyleDrop. It enhances StyleDrop's capabilities by generating high-quality images from text prompts, appending natural language style descriptors to content descriptors during both training and generation stages. This allows StyleDrop to capture the nuances of a user-provided style effectively.

How does StyleDrop's iterative training work?

StyleDrop's iterative training works by consistently improving the quality of generated images. This is accomplished by fine-tuning a very small amount of the model parameters, less than 1% of the total, allowing StyleDrop to efficiently learn new styles and enhance quality with either human or automated feedback.

Can StyleDrop collaborate and train with brand assets?

Yes, StyleDrop is designed to collaborate and train with user's own brand assets. This feature makes it easy for users to prototype ideas in their own unique style.

Can StyleDrop and DreamBooth be used together?

Yes, StyleDrop and DreamBooth can be used together. They can be combined to generate an image of a subject in any chosen style. Users are able to customize the style and subject based on their preference, gaining more control over the image generation process.

What is the performance of StyleDrop on Muse as compared to other models like Imagen and Stable Diffusion?

StyleDrop on Muse convincingly outperforms in style-tuning over existing methods based on diffusion models such as Imagen and Stable Diffusion. As a discrete-token based vision transformer, Muse leverages StyleDrop's capabilities to excel in the style-tuning of images, yielding superior quality outputs.

What sort of images can StyleDrop generate?

StyleDrop can generate a wide variety of images in many different styles. It can follow descriptions given in natural language to create styles ranging from 3D rendering and oil painting, to cartoon illustration and abstract rainbow colored flowing smoke wave design. It's versatile and effective in producing images that faithfully follow a specific style.

How does StyleDrop handle alphabets and style consistency?

StyleDrop processes alphabets with a consistent style described by a single reference image. The AI tool is able to generate images of alphabets while maintaining consistency in the specific style fed into it during the training and generation process.

Does StyleDrop need multiple images for style references?

No, StyleDrop doesn't need multiple images for style references. It's capable of generating high-quality images based on a single style reference provided by the user.

How are image owners acknowledged in StyleDrop?

In StyleDrop, image owners are acknowledged by giving them credit for their images. The owners of the images are thanked for sharing their valuable assets and links to the image assets used in the experiments are provided.

What steps does StyleDrop take to generate high-quality images?

StyleDrop generates high-quality images through a process that involves fine-tuning a small number of trainable parameters - less than 1% of the total model parameters. It uses Muse, a text-to-image generative vision transformer, to generate the images from text prompts. It then further enhances the image quality through iterative training, even when a single image is provided as the style reference.

Can StyleDrop capture both local and global effects in an image?

Yes, StyleDrop is designed to capture both local and global effects in an image. Its technique involves capturing nuanced details of a user-provided style, including color schemes, shading, design patterns, and both local and global effects.

How does StyleDrop manage to train with less than 1% of total model parameters?

StyleDrop manages to train with less than 1% of total model parameters through efficiency and focused fine-tuning. By targeting a minimal set of trainable parameters, StyleDrop can capture the nuances and details of a user-provided style, improving the image quality through iterative training without the need for excessive computational resources.

931

931 611

611 613

613 496

496