What is Lumiere, the model developed by Google Research?

Lumiere is a state-of-the-art space-time diffusion model created by Google Research. It is designed specifically for video generation, synthesizing videos that depict realistic, diverse, and coherent motion. It offers three key functionalities: Text-to-Video, Image-to-Video, and Stylized Generation. Lumiere is built on a Space-Time U-Net architecture that generates the entire temporal duration of a video in one pass, which helps maintain temporal consistency throughout.

What is the purpose of the Space-Time diffusion model in Lumiere?

The purpose of the space-time diffusion model in Lumiere is to generate videos that represent realistic, diverse, and coherent motion. This model focuses on creating videos from either text or image inputs and stylizing them with a unique style based on a single reference image, providing dynamic and interpretative visual content.

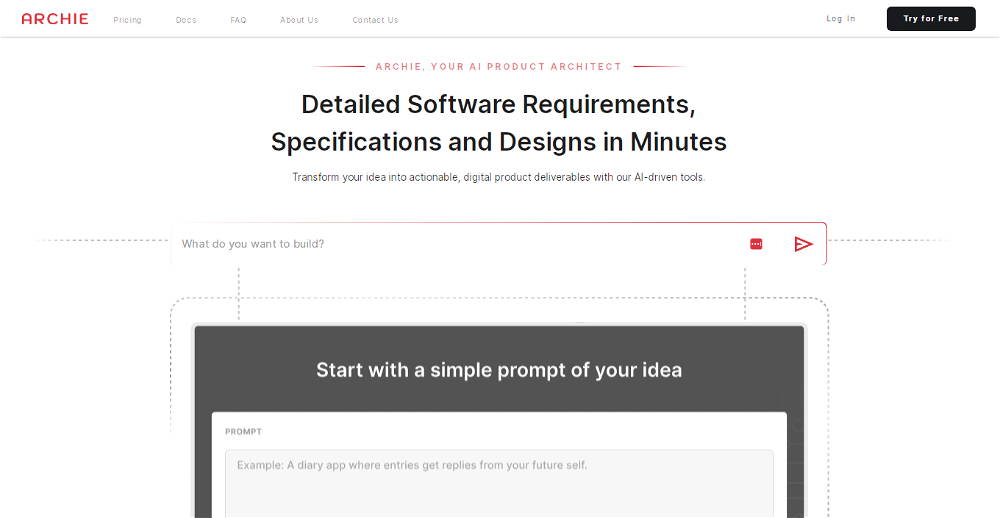

How does Lumiere's Text-to-Video feature work?

Lumiere's Text-to-Video feature works by using provided text inputs or prompts to generate videos. These inputs serve as the basis for the narrative or content of the video, with Lumiere creating a dynamic visual interpretation of the text.

What is Lumiere's Image-to-Video feature?

Lumiere's Image-to-Video feature takes an input image and uses it as a starting point for generating a video. Essentially, this feature brings static images to life by creating a dynamically moving video sequence that begins from the input image.

Can you explain Lumiere's Stylized Generation capability?

Lumiere's Stylized Generation capability enables the creation of uniquely styled videos using a single reference image. The reference image determines the style, and Lumiere applies this style to the generated video, resulting in distinctly stylized content. This is achieved by using fine-tuned text-to-image model weights.

How is Lumiere's video generation process different from other video models?

Unlike many existing video models, which first synthesize distant keyframes and then apply temporal super-resolution, Lumiere generates an entire video in a single pass. This approach avoids the temporal artifacts that can arise when frames are interpolated between keyframes, which helps preserve global temporal consistency in the video.
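The risk of interpolating between sparse keyframes can be illustrated with a toy example. The sketch below is not Lumiere code: it assumes a hypothetical object whose position oscillates at 2 Hz, samples keyframes every 0.5 seconds, and linearly interpolates between them. All names and numbers are chosen purely for illustration.

```python
import math

def true_position(t, freq_hz=2.0):
    """Position of a hypothetical object oscillating at freq_hz (toy signal)."""
    return math.sin(2 * math.pi * freq_hz * t)

def keyframe_interpolation(times, keyframe_times):
    """Linearly interpolate the object's position between sparse keyframes."""
    out = []
    for t in times:
        # Find the pair of keyframes that surrounds time t.
        for k0, k1 in zip(keyframe_times, keyframe_times[1:]):
            if k0 <= t <= k1:
                w = (t - k0) / (k1 - k0)
                out.append((1 - w) * true_position(k0) + w * true_position(k1))
                break
    return out

fps = 16
duration = 2  # seconds
times = [i / fps for i in range(fps * duration + 1)]
keyframe_times = [0.0, 0.5, 1.0, 1.5, 2.0]  # two keyframes per second

dense = [true_position(t) for t in times]            # motion at full frame rate
interp = keyframe_interpolation(times, keyframe_times)
max_error = max(abs(a - b) for a, b in zip(dense, interp))
print(f"max error of keyframe interpolation: {max_error:.3f}")
```

Every keyframe happens to land where the position is (numerically) zero, so the interpolated track stays flat and misses the oscillation entirely. A model that produces all frames together does not depend on sparse keyframes capturing the motion.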

What is the range of Lumiere's application?

Lumiere can generate a wide range of scenes and subjects, such as animals, nature scenes, objects, and people, including in novel and fantastical situations. This breadth makes it adaptable to the content requirements of many industries.

What is the potential use of Lumiere in the field of entertainment and gaming?

In entertainment and gaming, Lumiere could be used to generate realistic visual content for games, virtual reality experiences, and promotional videos. It could take text or image inputs and create dynamic visual content that enhances user experience by offering coherent, stylized, and engaging narratives.

What is the meaning of temporal consistency in relation to Lumiere?

Temporal consistency in relation to Lumiere refers to the maintenance of logical and smooth transitions throughout the video generation process. It ensures that the generated videos have uniformity and continuity in their motion dynamics over time.

What does Lumiere's Space-Time U-Net architecture do?

Lumiere's Space-Time U-Net architecture allows it to generate an entire video in one pass. This architecture enables the model to process multiple space-time scales, generate full-frame-rate, low-resolution video in a single pass, and maintain temporal consistency, resulting in improved quality and coherence of the video output.

How can Lumiere handle different scenes and subjects?

Lumiere can handle a wide variety of scenes and subjects. It can animate both general and specific themes, ranging from animals and nature scenes to human figures and objects. It can place these subjects in novel or fantastical situations while generating diverse, realistic videos with coherent motion.

What is Lumiere's role in dynamic and responsive visual content creation?

Lumiere takes text or image inputs and turns them into videos depicting realistic, diverse, and coherent motion. Because it interprets each input dynamically when generating a video, it offers flexibility and variety in visual content creation.

What are some example prompts for Lumiere's Text-to-Video feature?

Example prompts for Lumiere's Text-to-Video feature include 'A young hiker standing on mountain peak at sunrise', 'Aurora Borealis over winter mountain ridges', 'Astronaut on the planet Mars', and 'A dog driving a car on a suburban street wearing funny sunglasses'. Lumiere interprets each prompt to generate a corresponding video.

How does Lumiere use a single reference image for Stylized Generation?

In Lumiere's Stylized Generation feature, a single reference image is used to determine the overall style of the generated video. Lumiere extracts the artistic attributes of the reference image, allowing the model to replicate and apply those attributes across the entire video, effectively imbuing it with the style of the reference image.

What is the role of fine-tuned text-to-image model weights in Lumiere?

The fine-tuned text-to-image model weights in Lumiere play a crucial role in creating videos in the target style by adjusting the level of influence of certain stylistic characteristics in the generated video. They enable Lumiere to apply the unique styling derived from a single reference image across the duration of the video.

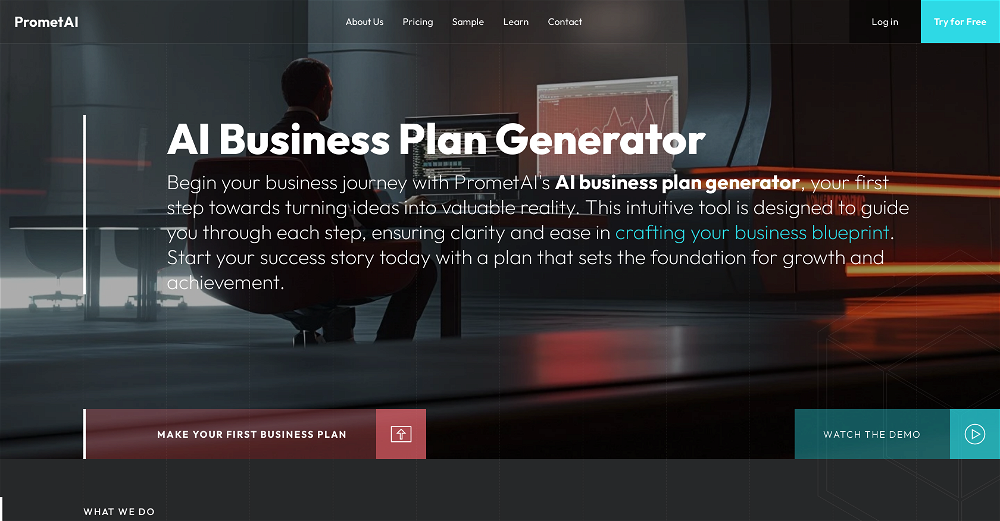

What are some potential applications of Lumiere in virtual reality and advertising?

In contexts like virtual reality and advertising, Lumiere could be used to create interactive and immersive experiences. For virtual reality, Lumiere can generate realistic and dynamic video content based on user input. In advertising, companies could use Lumiere to create custom, stylized video content that reflects their brand narrative and engages their target audience more effectively.

Can you explain how Lumiere achieves global temporal consistency?

Instead of synthesizing distant keyframes followed by temporal super-resolution, Lumiere utilizes a Space-Time U-Net architecture that generates the entire temporal duration of the video at once. This allows Lumiere to directly generate a full-frame-rate, low-resolution video by processing it in multiple space-time scales, enabling the model to maintain global temporal consistency.
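To make "multiple space-time scales" more concrete, here is a minimal sketch that shrinks a toy video along both its temporal and spatial axes. It assumes plain average pooling as a stand-in for the learned layers of a real network, and the function names and clip sizes are hypothetical. It only shows how the shape of the video changes from one scale to the next.

```python
def downsample_space_time(video, t_factor=2, s_factor=2):
    """Average-pool a video stored as video[t][y][x] in both time and space."""
    T, H, W = len(video), len(video[0]), len(video[0][0])
    out = []
    for t0 in range(0, T - t_factor + 1, t_factor):
        frame = []
        for y0 in range(0, H - s_factor + 1, s_factor):
            row = []
            for x0 in range(0, W - s_factor + 1, s_factor):
                # Collect one t_factor x s_factor x s_factor block of pixels.
                block = [
                    video[t][y][x]
                    for t in range(t0, t0 + t_factor)
                    for y in range(y0, y0 + s_factor)
                    for x in range(x0, x0 + s_factor)
                ]
                row.append(sum(block) / len(block))
            frame.append(row)
        out.append(frame)
    return out

def shape(video):
    """Return (frames, height, width) of a nested-list video."""
    return (len(video), len(video[0]), len(video[0][0]))

# Toy grayscale clip: 16 frames of 8x8 pixels, each frame filled with its index.
video = [[[float(t) for _ in range(8)] for _ in range(8)] for t in range(16)]
coarse = downsample_space_time(video)      # one scale down
coarser = downsample_space_time(coarse)    # two scales down
print(shape(video), shape(coarse), shape(coarser))
```

Each step halves the number of frames as well as the height and width, so the coarser levels summarize the whole clip at once rather than a handful of isolated keyframes.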

How can Lumiere animate the content of an image?

Lumiere's cinemagraph feature enables it to animate specific regions of a single image, while leaving the rest static. This is done by indicating the desired region to animate using a mask, and Lumiere applies motion to that selected area in the output video.
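The role of the mask can be sketched with a simple compositing step. This is only an illustration of what the mask means (1 = animate, 0 = keep static), not a reproduction of how Lumiere generates the motion. The function name and the tiny 2x2 frames are hypothetical.

```python
def composite_cinemagraph(static_image, animated_frames, mask):
    """Use animated pixels where mask is 1 and the static image where mask is 0."""
    out = []
    for frame in animated_frames:
        out.append([
            [frame[y][x] if mask[y][x] else static_image[y][x]
             for x in range(len(mask[0]))]
            for y in range(len(mask))
        ])
    return out

static_image = [[10, 10], [10, 10]]          # the input image
mask = [[1, 0], [0, 0]]                      # animate only the top-left pixel
animated_frames = [[[v, v], [v, v]] for v in (1, 2, 3)]  # stand-in for generated motion

result = composite_cinemagraph(static_image, animated_frames, mask)
for frame in result:
    print(frame)
```

Only the masked pixel changes from frame to frame, while every other pixel keeps the value from the input image.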

What does the video inpainting feature in Lumiere mean?

Video inpainting in Lumiere involves reconstructing missing or removed parts of a video. If parts of the source footage are masked (hidden), Lumiere can predict and fill in the masked regions with plausible-looking content, potentially recovering the original flow and movement of the video.
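As a rough sketch of the input side of inpainting, the snippet below hides the masked pixels of a toy clip. It assumes a per-frame binary mask where 1 means "hidden", and the helper name is hypothetical. The prediction step itself is the model's job and is not shown.

```python
def hide_masked_pixels(video, mask, fill=0):
    """Return a copy of video[t][y][x] with pixels replaced by fill where mask is 1."""
    return [
        [
            [fill if mask[t][y][x] else video[t][y][x]
             for x in range(len(video[t][y]))]
            for y in range(len(video[t]))
        ]
        for t in range(len(video))
    ]

# Toy clip: 2 frames of 2x2 pixels; the top-left pixel is hidden in both frames.
video = [[[5, 6], [7, 8]], [[9, 10], [11, 12]]]
mask = [[[1, 0], [0, 0]], [[1, 0], [0, 0]]]

masked_video = hide_masked_pixels(video, mask)
print(masked_video)
```

An inpainting model would be given the masked clip together with the mask and asked to fill in only the hidden pixels, leaving the visible ones as they are.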

How are off-the-shelf text-based image editing methods used for consistent video editing in Lumiere?

In Lumiere's video stylization, off-the-shelf text-based image editing methods can be extended to video editing, which allows for consistent editing across multiple video frames. With this approach, Lumiere maintains artistic style and thematic unity across the duration of the entire video.
