What is the Kmeans ChatGPT Web platform?

The Kmeans ChatGPT Web platform is a tool that generates text from user prompts. Its functions include chat, Q&A, and web interaction.

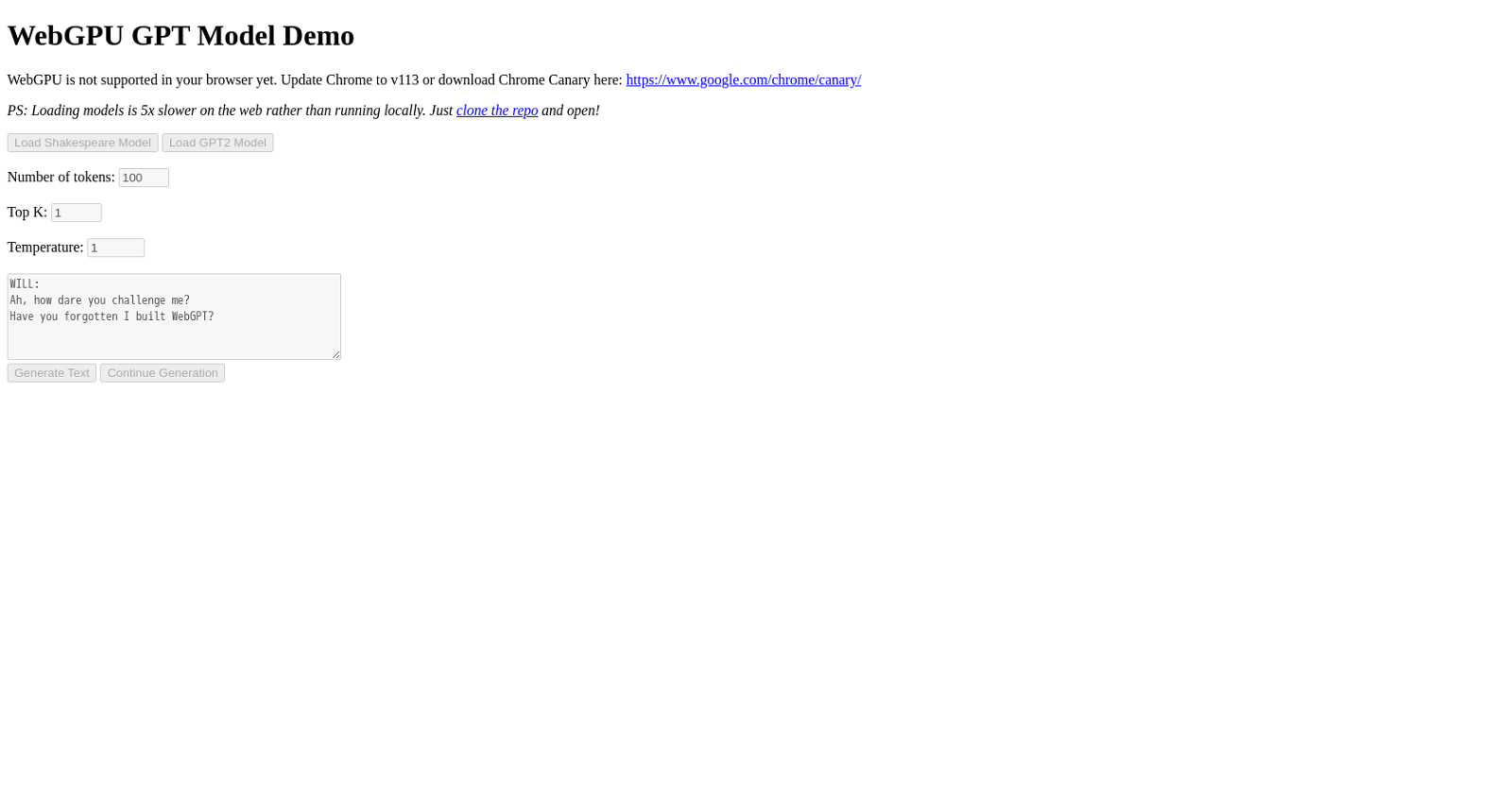

What is the purpose of the WebGPU GPT Model Demo?

The WebGPU GPT Model Demo showcases GPT text generation running on WebGPU. Users can load different models, enter a prompt, and generate text from that prompt.

What types of models can be loaded on the WebGPU GPT Model Demo?

The WebGPU GPT Model Demo can load several models. The examples given on its website are the Shakespeare Model and the GPT2 Model.

How does the WebGPU GPT Model Demo use user input?

User input takes the form of a prompt or starting sentence. The GPT model generates text from this input, producing a continuous stream of text that extends the user's prompt.
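The prompt-continuation idea can be sketched as a simple loop that repeatedly asks a model for the next token and appends it to the text so far. This is a minimal illustration, not the demo's actual code: `generate` and `toy_next_token` are hypothetical names, and the toy function stands in for a real GPT model.

```python
def generate(next_token, prompt_tokens, num_tokens):
    """Extend a prompt by repeatedly appending the model's next token."""
    tokens = list(prompt_tokens)  # copy so the caller's prompt is not modified
    for _ in range(num_tokens):
        tokens.append(next_token(tokens))
    return tokens


def toy_next_token(tokens):
    """Hypothetical stand-in for a model: cycles through a tiny vocabulary."""
    vocab = ["to", "be", "or", "not"]
    return vocab[len(tokens) % len(vocab)]


first = generate(toy_next_token, ["to", "be"], 3)
print(" ".join(first))

# Continuing the text means feeding the previous output back in as the prompt.
more = generate(toy_next_token, first, 2)
print(" ".join(more))
```

Because each step only sees the tokens produced so far, continuing a generated passage is the same operation as starting from a longer prompt.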

Do I need a specific browser for the WebGPU GPT Model Demo to work?

Yes. The demo requires an up-to-date version of Google Chrome, because it depends on WebGPU. Chrome Canary, which supports WebGPU, is an alternative.

Can the generated text be continued for further development?

Yes. The generated text can be continued further at the user's request.

What settings can be adjusted in the WebGPU GPT Model Demo?

Users can adjust three settings: the number of tokens, top K, and temperature.

Why is the WebGPU GPT Model Demo slower on the web than running locally?

The WebGPU GPT Model Demo is slower on the web than running locally because the models must first be loaded over an internet connection.

How can I speed up the process of using WebGPU GPT Model Demo?

To speed things up, clone the repository and run the models on a local machine.

Who is the WebGPU GPT Model Demo intended for?

The WebGPU GPT Model Demo is intended primarily for developers and enthusiasts interested in exploring and experimenting with the GPT model's text generation capabilities.

What is the temperature setting on the WebGPU GPT Model Demo?

The 'temperature' setting controls the randomness of the text generation. A higher temperature produces more random output, and a lower temperature produces more predictable output.
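One common way temperature is applied in language models is to divide the model's raw scores (logits) by the temperature before converting them to probabilities. The sketch below assumes that approach; the demo's own implementation is not shown in this FAQ, and `softmax_with_temperature` is a hypothetical helper name.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Turn raw scores into probabilities, scaled by a positive temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


logits = [2.0, 1.0, 0.0]  # made-up scores for three candidate tokens
for t in (0.5, 1.0, 2.0):
    probs = softmax_with_temperature(logits, t)
    print(f"temperature={t}: {[round(p, 3) for p in probs]}")
```

At lower temperatures the top-scoring candidate takes a larger share of the probability, and at higher temperatures the distribution flattens, which is why the output becomes more varied.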

Can I use the WebGPU GPT Model Demo without Google Chrome?

No. The WebGPU GPT Model Demo requires either an up-to-date version of Google Chrome or Chrome Canary.

Why does the WebGPU GPT Model Demo require updating Chrome to a specific version?

The demo runs on WebGPU, and only sufficiently recent versions of Chrome support that technology.

What is the significance of 'top k' in the WebGPU GPT Model Demo?

'Top k' is a setting that influences text generation. It controls how many of the most likely candidates the model considers when predicting the next word in a sentence.
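A typical top-k sampler keeps only the k highest-scoring candidates and then samples among them in proportion to their probabilities. The following is a minimal sketch under that assumption, not the demo's code; `top_k_sample` is a hypothetical name and the scores are made up.

```python
import math
import random


def top_k_sample(logits, k, rng=random):
    """Sample an index from the k highest-scoring candidates only."""
    if not 1 <= k <= len(logits):
        raise ValueError("k must be between 1 and the number of candidates")
    # Indices of the k largest scores.
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    peak = max(logits[i] for i in top)
    # Unnormalized softmax weights over the surviving candidates.
    weights = [math.exp(logits[i] - peak) for i in top]
    return rng.choices(top, weights=weights, k=1)[0]


logits = [0.1, 3.0, 2.0, -1.0]  # made-up scores for four candidate tokens
rng = random.Random(0)          # seeded so runs are repeatable
print([top_k_sample(logits, 2, rng) for _ in range(10)])
```

With k set to 1 the sampler always picks the single most likely candidate; larger values of k allow less likely candidates to appear.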

What's the difference between the Shakespeare Model and the GPT2 Model?

The Shakespeare Model and the GPT2 Model are two pre-trained models available in the WebGPU GPT Model Demo. The key difference presumably lies in their training data, and therefore in the style of the generated text. The Shakespeare Model likely generates text resembling Shakespeare's works, whereas the GPT2 Model likely produces more general text.

How do I clone the repository to run the models locally?

Follow the link on the website to the GitHub repository, then run the git clone command with the repository's URL from a local command line.

Does the WebGPU GPT Model Demo support languages other than English?

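Unknown. The available information about the demo does not say whether languages other than English are supported.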

Is there a limit to the number of tokens that can be used in WebGPU GPT Model Demo?

Unknown. The available information about the demo does not state a limit on the number of tokens.

Why does loading models take longer in WebGPU GPT Model Demo?

Loading takes longer because the demo downloads the models over the internet, which is slower than reading them from local storage.

Are there any alternatives if WebGPU is not supported in my browser?

Yes. If your browser does not support WebGPU, the suggested alternative is to download and use Chrome Canary, which does support it.
