Embedstore

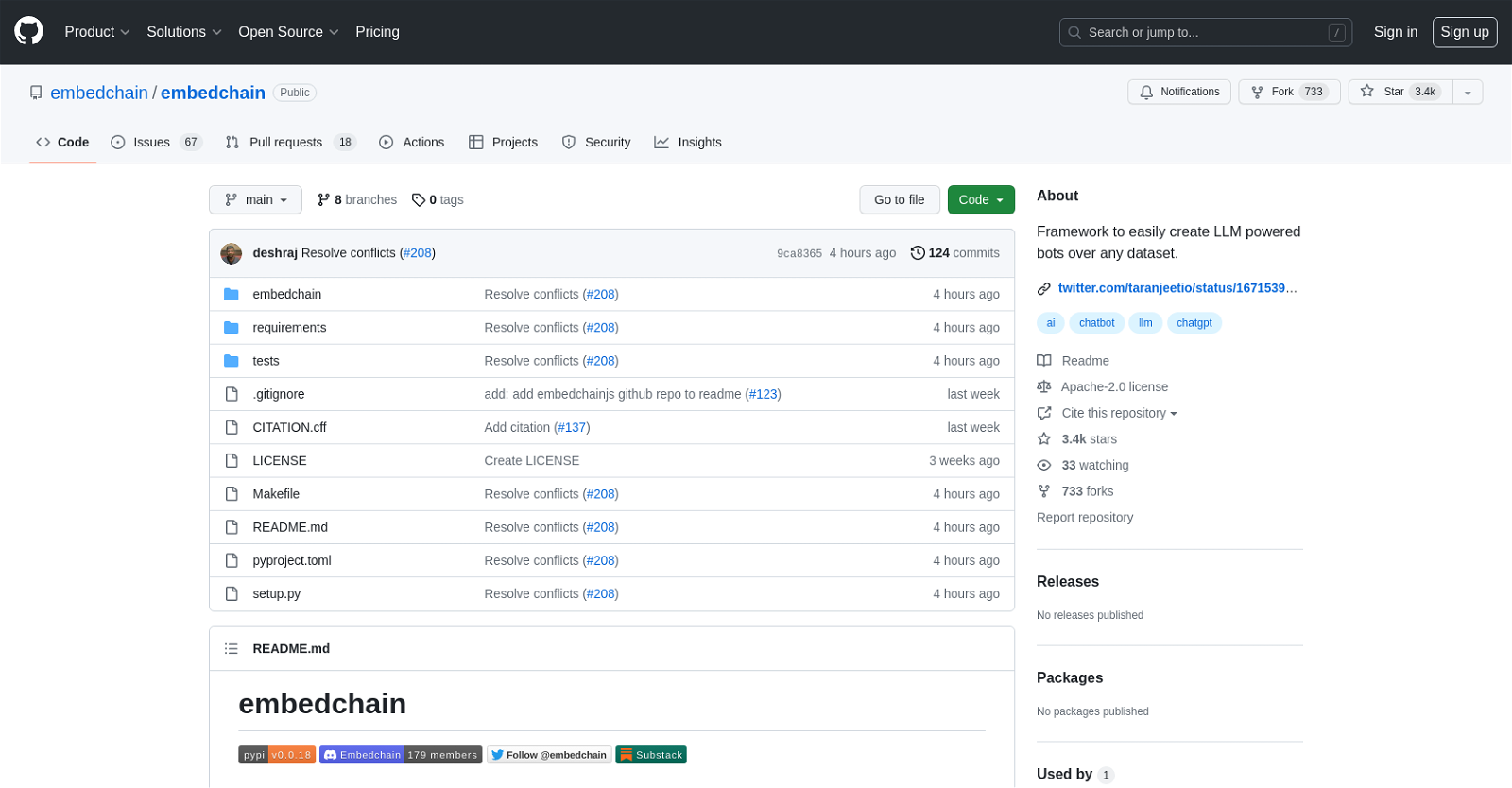

"embedchain/embedchain" is a framework, hosted on GitHub, that lets users create bots powered by Large Language Models (LLMs) over any dataset.

It provides functionality for building and deploying these bots. The project is hosted on GitHub, which offers workflow automation, package hosting and management, vulnerability detection and correction, instant development environments, AI-powered code writing assistance, code review management, work planning and tracking, and collaboration outside of code. From the "embedchain/embedchain" repository page, users can explore the features and documentation provided by GitHub.

They can also access GitHub Skills and the GitHub Blog for additional resources and information. The tool is designed to be useful for enterprises, teams, startups, and educational purposes.

It supports CI/CD and automation, as well as DevOps and DevSecOps solutions. Users can also find case studies, customer stories, and resources related to open source. The repository has 3.4k stars and 733 forks on GitHub.

It is licensed under the Apache-2.0 license. Users can browse the code, raise issues, submit pull requests, and use the actions and security features offered by GitHub. Overall, "embedchain/embedchain" simplifies the creation of LLM-powered bots over any dataset, and its GitHub hosting gives developers the platform's tooling for development and deployment.




